This is the final installment of the “Beyond the Hype” series. In Part 1, we defined the vision of the “System of Intelligence.” In Part 2, we covered the “Day 1” implementation reality of data hygiene and trust.

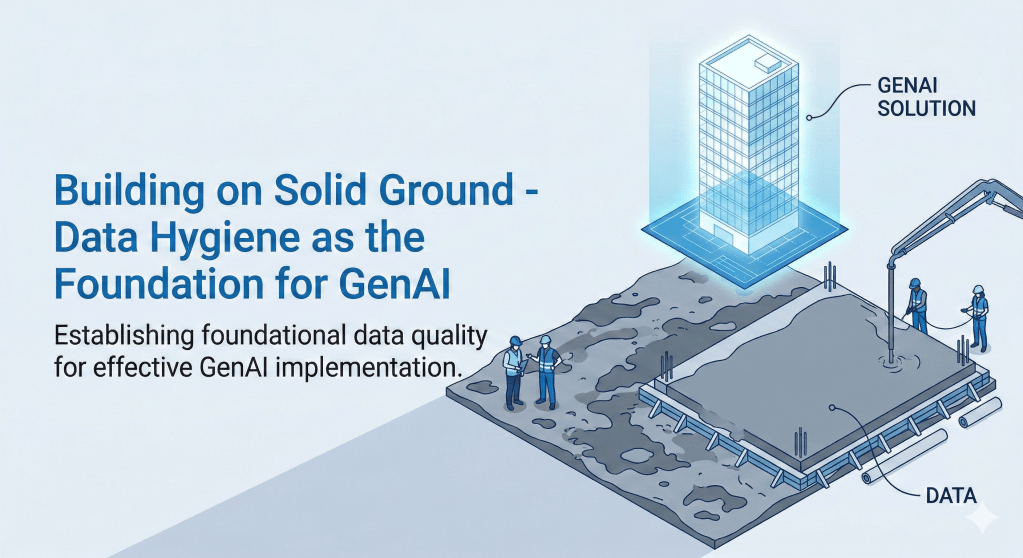

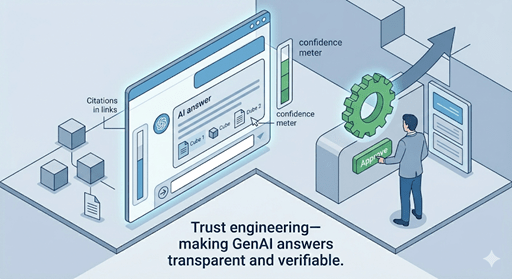

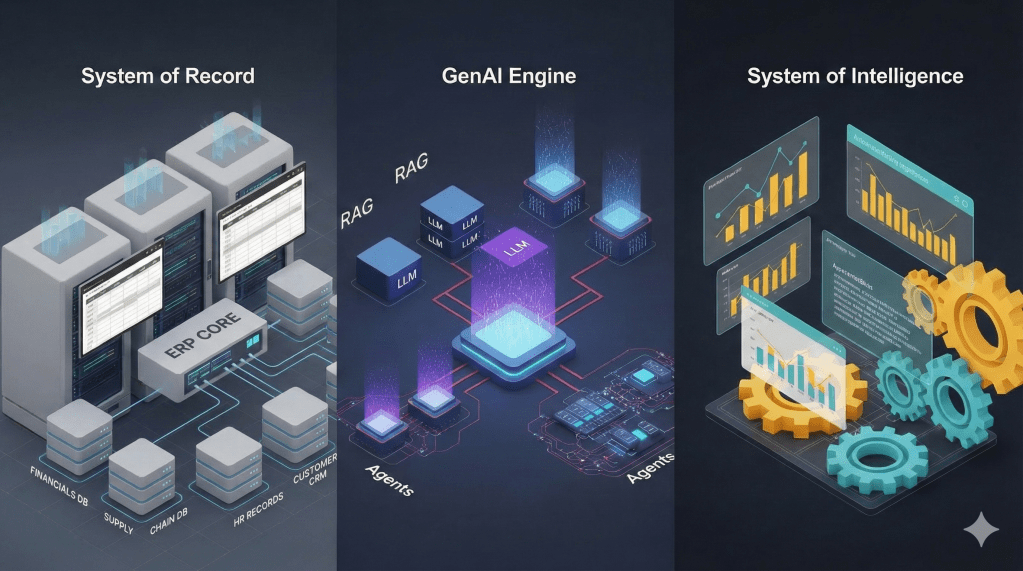

We began this series by reimagining the ERP system not as a passive data warehouse but as an active partner: a shift from “System of Record” to “System of Intelligence.” We then worked through the “Day 1” implementation challenges: prioritizing data hygiene, and using “Glass Box” engineering, which emphasizes transparency and explainability, to bridge the trust gap. Now we arrive at the most critical phase.

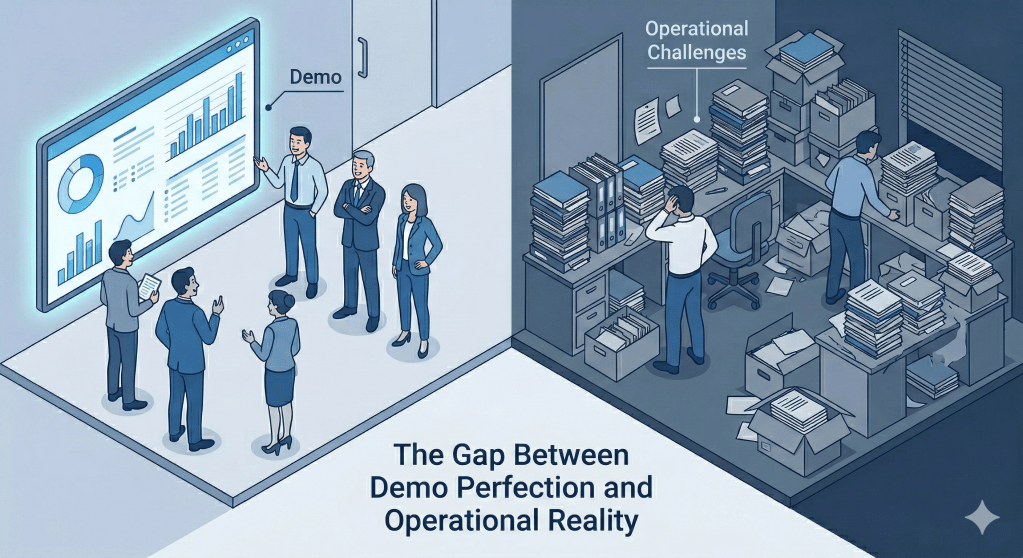

The implementation phase of a Generative AI (GenAI) project typically ends on a high note: the “Go-Live” celebration. The system has been deployed, the initial use cases are functioning, and the users are cautiously optimistic. However, the true challenge of an AI-augmented ERP begins the morning after deployment.

Unlike traditional software modules, which remain static until explicitly patched, GenAI agents use probabilistic models that interact with dynamic data. This introduces a fundamental instability: system behavior degrades without active intervention. “Day 2” operations are therefore not only about maintaining uptime; they are about maintaining alignment. A system can be 100% available and still be misaligned, confidently generating wrong answers, drafting obsolete contracts, or producing biased recommendations. It must be steered continuously back toward the organization’s current business rules, data reality, and intended behavior.

In this post, we examine the critical “Day 2” operational challenges of a GenAI-augmented ERP — the forces that cause system behavior to erode over time. We will address the concept of “Drift,” the hidden costs of AI cognition, and the governance frameworks needed to keep the system aligned with your business reality.

The New Reality of “Drift”

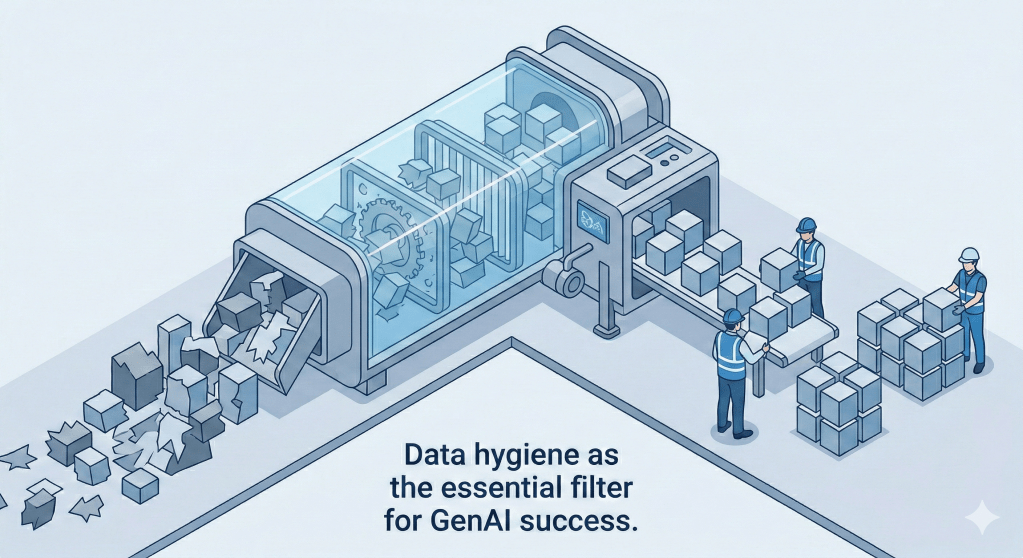

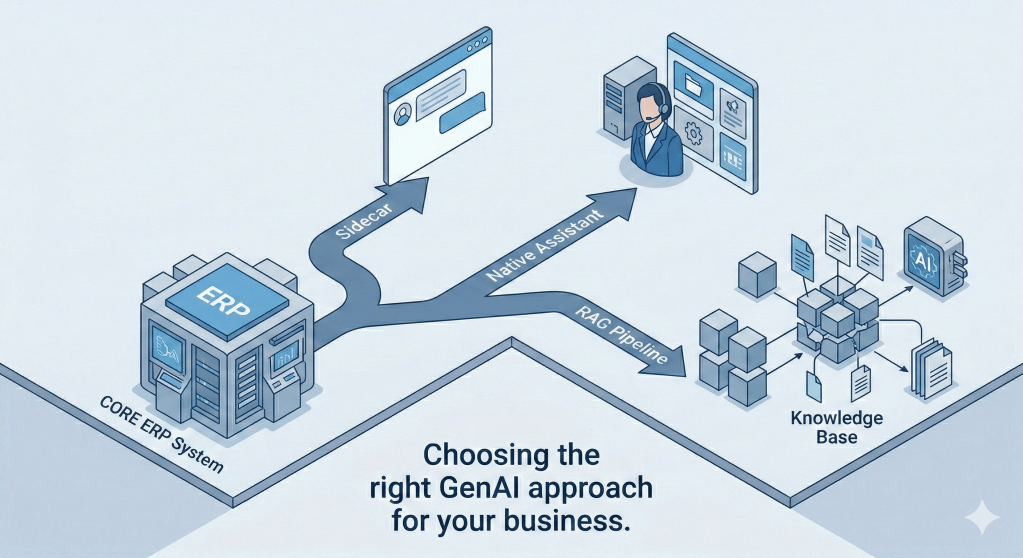

In a traditional ERP environment, a configured business rule (e.g., “PO approval limit > $5000”) stays in force until someone changes the configuration or code. In a GenAI-augmented environment, the system’s output is a function of both the context data it retrieves from a retrieval-augmented generation (RAG) repository and the model it uses to interpret that data. Both variables are subject to “Drift.”

Data Drift: The Context Shift

ERP data is highly dynamic. New General Ledger (GL) accounts are created, product lines are discontinued, and vendor payment terms are renegotiated. A GenAI model prompted to “Draft a standard procurement contract” relies on the underlying data being current. If the business logic changes (e.g., a new sustainability clause is required for all vendors) but the vector database or knowledge base is not updated, the AI will confidently generate obsolete contracts. This is Data Drift: the divergence between the knowledge the system retrieves and the business’s reality.
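One way to make Data Drift visible is to compare when a source record last changed with when its copy in the knowledge base was last indexed. The sketch below is a minimal illustration under assumptions: the `IndexedDoc` fields, the document ids, and the 24-hour grace period are hypothetical and not taken from any specific vector database.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class IndexedDoc:
    doc_id: str
    source_updated_at: datetime  # last change in the ERP or policy source
    indexed_at: datetime         # last time the document was re-embedded


def find_stale_docs(docs, max_lag=timedelta(hours=24)):
    """Return ids of documents whose source changed more than max_lag after indexing."""
    return [
        d.doc_id
        for d in docs
        if d.source_updated_at - d.indexed_at > max_lag
    ]


# Hypothetical sample data for illustration only.
docs = [
    IndexedDoc("vendor-terms", datetime(2024, 5, 1), datetime(2024, 5, 2)),
    IndexedDoc("contract-template", datetime(2024, 6, 10), datetime(2024, 3, 1)),
]
stale = find_stale_docs(docs)
print(stale)
```

Here the contract template was changed at the source long after it was last indexed, so it is flagged for re-indexing, while the vendor terms were indexed after their last change and pass.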

Model Drift: The Behavior Shift

The underlying Large Language Models (LLMs) are also subject to updates by their providers. A prompt that generated a concise summary in version 3.5 might produce a verbose or hallucinated response in version 4.0. This Model Drift means that even if the business data remains constant, the system’s output can change unpredictably. The “deterministic” stability of the ERP gives way to “probabilistic” fluidity once it is augmented with GenAI.
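A simple defensive habit is to record exactly which model version and prompt the system was last validated against, so that any change is noticed and triggers re-testing. The sketch below assumes the model identifier can be pinned in configuration; the model names, prompt text, and function names are hypothetical.

```python
import hashlib
import json


def config_fingerprint(model_id: str, prompt_template: str, temperature: float) -> str:
    """Hash everything that shapes the model's behavior into one stable string."""
    payload = json.dumps(
        {"model": model_id, "prompt": prompt_template, "temperature": temperature},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_revalidation(current: str, last_validated: str) -> bool:
    """Any difference from the validated fingerprint means outputs may have shifted."""
    return current != last_validated


# Hypothetical model identifiers and prompt.
PROMPT = "Summarize the vendor contract in three sentences."
validated = config_fingerprint("example-model-3.5", PROMPT, 0.0)
unchanged = config_fingerprint("example-model-3.5", PROMPT, 0.0)
upgraded = config_fingerprint("example-model-4.0", PROMPT, 0.0)

print(needs_revalidation(unchanged, validated))
print(needs_revalidation(upgraded, validated))
```

A fingerprint only tells you that something changed, not whether the output got worse; it is a trigger for re-evaluation rather than a measurement of drift.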

The Financial Surprise: Managing the Cost of Cognition

The operational expense (OpEx) of traditional software is generally predictable (license fees + hosting). The OpEx of a GenAI system is consumption-based and highly variable. Every interaction consumes “tokens,” and complex reasoning tasks cost significantly more than simple retrieval tasks.

Without governance, the “Cost of Cognition” can spiral out of control. A user asking the system to “Summarize the last 10 years of sales data” might trigger a massive, expensive query operation that could have been handled by a standard report.
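To see why such a request is expensive, it helps to do the arithmetic. The sketch below estimates per-request cost from token counts and applies a simple budget check. The prices, token counts, and budget are hypothetical placeholders chosen for illustration, not any provider's actual rates.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Cost = tokens in thousands times the per-1k price, summed for input and output."""
    return ((input_tokens / 1000) * price_in_per_1k
            + (output_tokens / 1000) * price_out_per_1k)


def within_budget(cost: float, budget: float) -> bool:
    """Guardrail: allow the request only if its estimated cost fits the budget."""
    return cost <= budget


# Hypothetical prices in dollars per 1,000 tokens.
PRICE_IN = 0.01
PRICE_OUT = 0.03

# A simple lookup versus a summary that pulls years of sales rows into context.
lookup_cost = estimate_cost(500, 100, PRICE_IN, PRICE_OUT)
summary_cost = estimate_cost(2_000_000, 2_000, PRICE_IN, PRICE_OUT)

print(f"lookup:  ${lookup_cost:.3f}")
print(f"summary: ${summary_cost:.2f}")
print(within_budget(summary_cost, budget=1.00))
```

Under these assumed numbers the summary request costs thousands of times more than the lookup, which is the kind of gap a per-request budget is meant to catch before the call is made.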

The Solution: Tiered Architecture

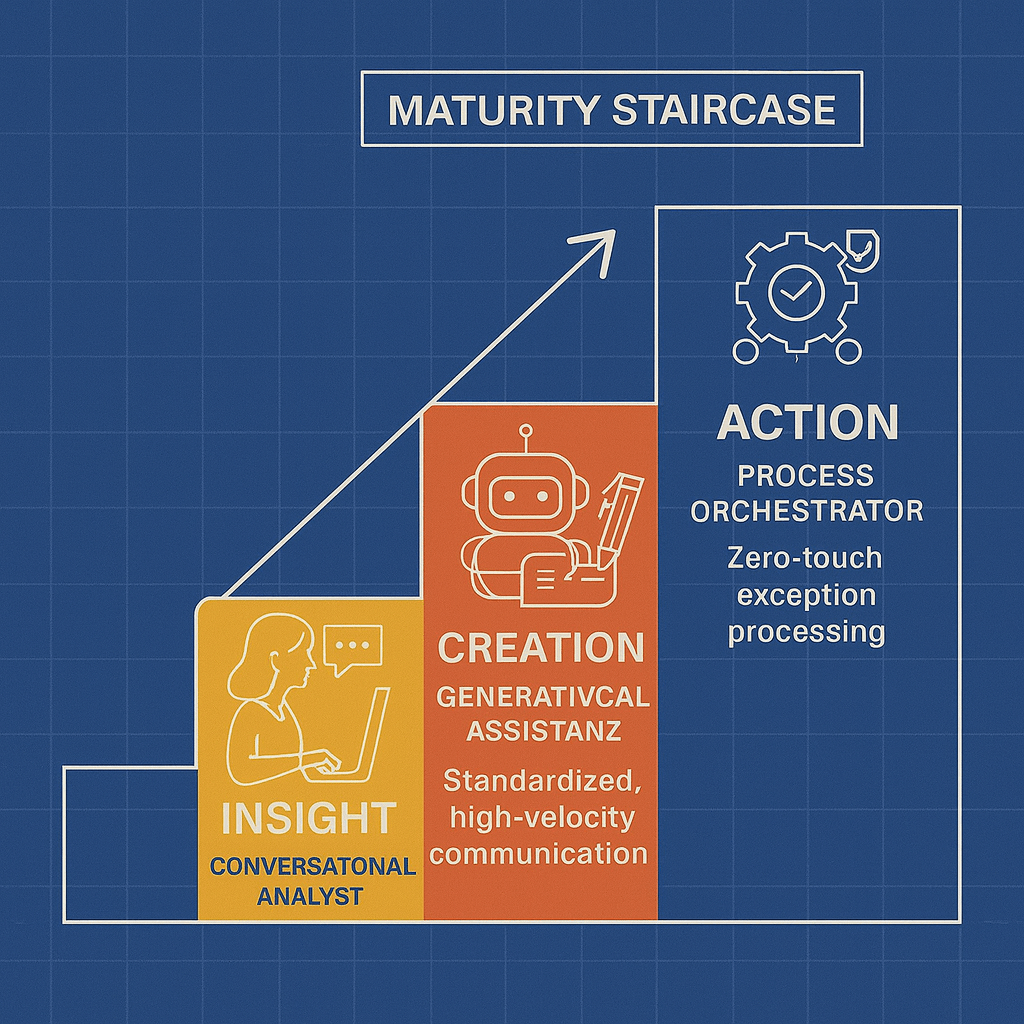

Financial governance requires a tiered approach to model selection:

- Tier 1 (Routing/Simple): Use small language models (SLMs), which are faster and cheaper, for basic intent classification and simple lookups.

- Tier 2 (Complex Reasoning): Reserve powerful, expensive reasoning models (LLMs) for complex exceptions and creative generation tasks.

This architectural decision ensures that the organization pays for intelligence only when it is actually required.
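The routing decision can be sketched in a few lines. This is an illustration under assumptions, not a reference design: the keyword-based `classify_intent` stands in for a real Tier 1 SLM call, and the model names are hypothetical.

```python
from dataclasses import dataclass

SIMPLE_INTENTS = {"lookup"}


@dataclass
class Route:
    tier: int
    model: str


def classify_intent(query: str) -> str:
    """Stand-in for the Tier 1 classifier: keyword rules instead of a real SLM call."""
    q = query.lower().strip()
    if q.startswith(("what is", "show", "list")):
        return "lookup"
    return "complex"


def route(query: str) -> Route:
    """Send simple intents to the cheap model and everything else to the expensive one."""
    if classify_intent(query) in SIMPLE_INTENTS:
        return Route(tier=1, model="small-model")   # hypothetical model name
    return Route(tier=2, model="large-model")       # hypothetical model name


print(route("What is the payment term for Vendor X?"))
print(route("Draft a standard procurement contract"))
```

The design choice worth noting is the default: anything the classifier does not recognize as simple falls through to Tier 2, trading some cost for a lower risk of a weak model handling a hard request.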

Redefining Change Management: The “Golden Set”

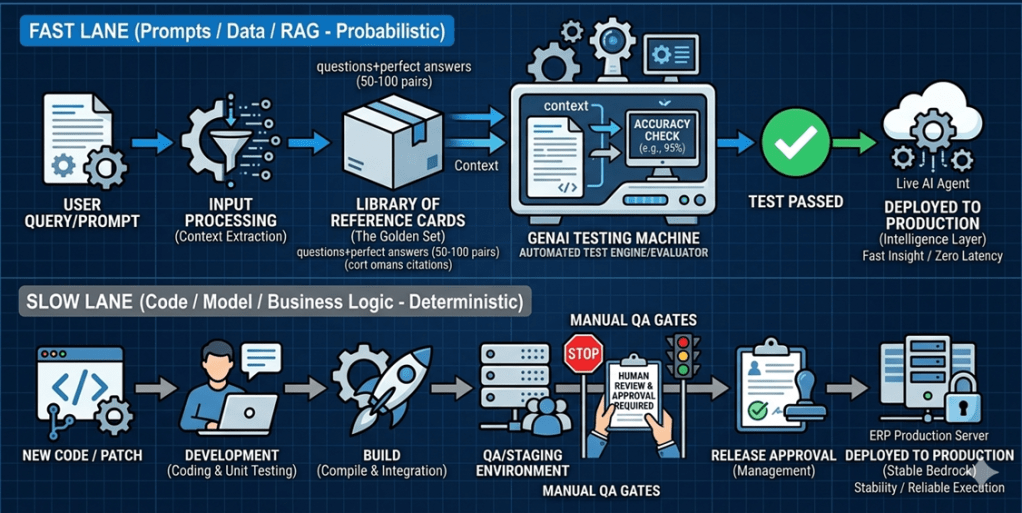

Traditional software Change Management utilizes a linear progression: Development → QA → Production. Code is written, tested for bugs, and deployed. This process is too slow and rigid for GenAI. Prompts, knowledge bases, and model parameters need to be adjusted frequently to combat drift.

The solution is a new validation methodology known as the “Golden Set.” Think of it like a standardized exam for your AI system. Just as a student’s knowledge is validated against a fixed set of correct answers before they are certified, every change to your AI system is validated against a fixed set of known-good responses before it is promoted to production. If the system “fails the exam,” the change is blocked.

The Golden Set Methodology

A “Golden Set” is a curated library of 50-100 “Question + Perfect Answer” pairs that define the expected behavior of the system.

- Reference: “What is the payment term for Vendor X?” → “Net 30.”

- Evaluation: When a prompt is tweaked or a model is updated, the entire Golden Set is run automatically.

- Validation: The system compares the new answers against the “Perfect Answers.” If the accuracy drops below a defined threshold (e.g., 95%), the change is rejected.
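The three steps above can be sketched as a small regression gate. This is a minimal sketch under assumptions: answers are compared by normalized exact match, which is a simplification (a production setup might use semantic similarity or human review instead), the stub answer functions stand in for the real system, and all pairs other than the Vendor X example are hypothetical.

```python
def normalize(text: str) -> str:
    """Lowercase, drop a trailing period, and collapse whitespace before comparing."""
    return " ".join(text.lower().strip().rstrip(".").split())


def evaluate(answer_fn, golden_set) -> float:
    """Run every golden question through the system and return the fraction correct."""
    correct = sum(
        normalize(answer_fn(question)) == normalize(expected)
        for question, expected in golden_set
    )
    return correct / len(golden_set)


def gate(answer_fn, golden_set, threshold: float = 0.95) -> bool:
    """Approve the change only if accuracy meets the threshold."""
    return evaluate(answer_fn, golden_set) >= threshold


# Tiny illustrative set; pairs after the first are hypothetical.
GOLDEN_SET = [
    ("What is the payment term for Vendor X?", "Net 30."),
    ("What is the payment term for Vendor Y?", "Net 45."),
    ("Which currency is Vendor X invoiced in?", "USD"),
    ("Who approves POs for Plant A?", "Plant Controller"),
]

# Stubs standing in for the system before and after a prompt change.
baseline = dict(GOLDEN_SET)
regressed = {**baseline, "What is the payment term for Vendor Y?": "Net 60."}

print(gate(baseline.get, GOLDEN_SET))
print(gate(regressed.get, GOLDEN_SET))
```

With four pairs, a single wrong answer drops accuracy to 75% and the change is rejected; with the 50-100 pairs described above, the same 95% threshold tolerates a small number of misses.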

This automated regression testing allows for a Two-Speed Change Process:

- Fast Lane: Prompt engineers can update instructions and knowledge bases daily, relying on the Golden Set to catch regressions.

- Slow Lane: Core code changes and architectural updates continue to follow the rigorous, slower SDLC process.
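The two lanes amount to a small decision rule. The sketch below is one possible encoding; the change-type labels and return strings are hypothetical and would map onto whatever your change-tracking tool uses.

```python
FAST_LANE = {"prompt", "knowledge_base"}
SLOW_LANE = {"core_code", "architecture"}


def select_lane(change_type: str, golden_set_passed: bool) -> str:
    """Decide how a change proceeds based on its type and its Golden Set result."""
    if change_type in SLOW_LANE:
        return "slow lane: full SDLC"
    if change_type in FAST_LANE:
        if golden_set_passed:
            return "fast lane: promote"
        return "blocked: golden set failed"
    raise ValueError(f"unknown change type: {change_type}")


print(select_lane("prompt", golden_set_passed=True))
print(select_lane("knowledge_base", golden_set_passed=False))
print(select_lane("architecture", golden_set_passed=True))
```

Raising on an unknown change type is deliberate: a change that fits neither lane should be classified by a person rather than silently fast-tracked.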

Conclusion: New Roles for a New Era

Operationalizing GenAI in the ERP requires more than new software; it requires new governance roles. The “AI Librarian” becomes essential for curating the knowledge base and ensuring data freshness. The “AI Auditor” is required to manage the Golden Sets and monitor for bias and drift.

The transition from “Day 1” (Implementation) to “Day 2” (Operations) is the moment the organization moves from unboxing a tool to mastering a discipline. The system will not sit still; the governance framework must be designed to steer it.

At 1CloudHub, we help enterprise customers adopt GenAI as an augmentation of their ERP ecosystems and unlock tangible business and operational value. From identifying the right rollout strategies to implementing robust governance frameworks, we partner with organizations at every stage of the journey. Our approach goes beyond deployment: we embed the right processes, tools, and methodologies to combat drift, manage costs, and maintain alignment. Through structured knowledge transfer and hands-on training, we ensure that your teams are equipped to operate and evolve these solutions with confidence. The goal is not just a successful go-live, but a sustainably intelligent enterprise.