Introduction

Rethinking Enterprise Scaling in the Era of GenAI is a four-part series sharing my point of view on how Generative AI fundamentally changes the concept of enterprise scale: not as a technology topic, but as a strategy and operating-model challenge. Each post builds on the last, moving from foundational philosophy to practical execution to financial justification.

This is Part 1 of 4 in the series: How GenAI Unlocks a Smarter Growth Playbook

The Assumption That is Quietly Expiring

Here is a belief so fundamental to enterprise strategy that most leaders have never had to question it:

To grow, you must add.

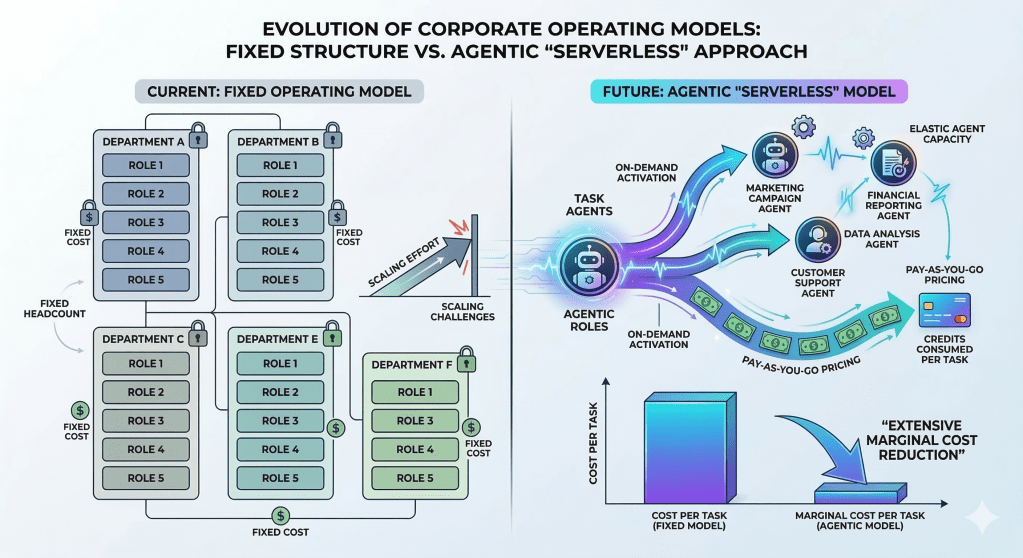

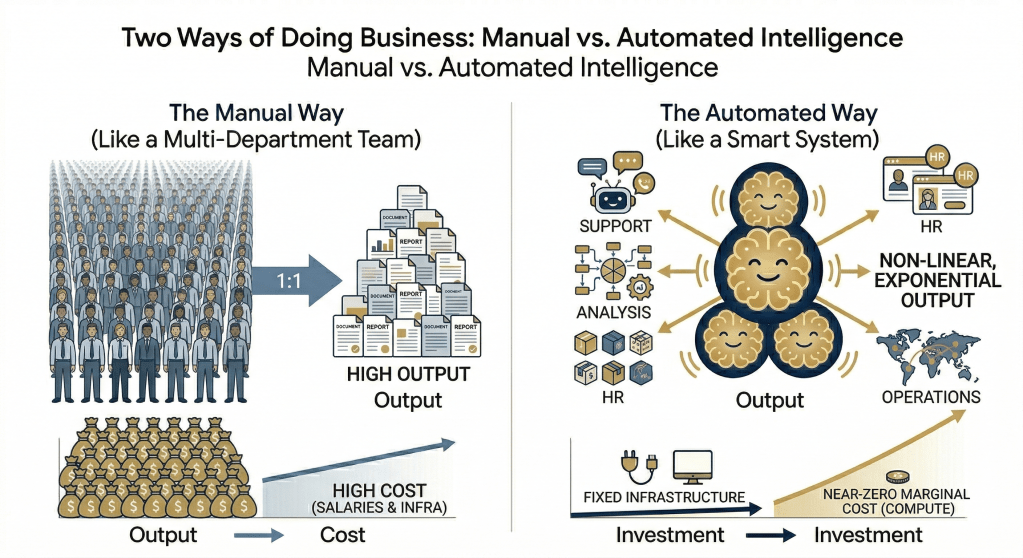

Add people to increase output. Add servers to increase throughput. Add processes to increase control. Scale, by definition, meant proportional resource expansion. The more volume you needed to handle, the more headcount you hired. The more throughput you needed, the more infrastructure you provisioned. Growth and cost moved together in a predictable, linear lockstep.

This model worked well for decades. It shaped how consulting frameworks were built, how transformation programs were designed, and how the entire discipline of enterprise architecture evolved.

GenAI is making this assumption obsolete.

Not gradually. Not partially. The foundational relationship between resources and output that has governed enterprise growth strategy for a generation is breaking. Enterprises that continue to operate on the old assumption will find themselves scaling costs faster than they scale results, while competitors that adopt GenAI scale results with only marginal increases in cost.

This post is about understanding why that shift is happening, what it means for how you think about growth, and why it demands a fundamentally new playbook.

The Old Equation: Linear Scale

The traditional enterprise scaling model can be expressed simply: Output ∝ Resources

More resources in, more output out. This wasn’t just a financial formula — it was the organizing logic of every major business function:

- Sales: Grow revenue by growing the sales team.

- Customer Operations: Handle more customers by hiring more agents.

- IT: Process more transactions by provisioning more infrastructure.

- Knowledge Work: Produce more analysis by adding more analysts.

The model had enormous merit. It was predictable. It was manageable. It gave CFOs firm ground to stand on when building growth projections. But it came with an inescapable constraint: growth required proportional investment. Every new dollar of revenue had to be earned by spending a near-equivalent dollar on resources to deliver it.

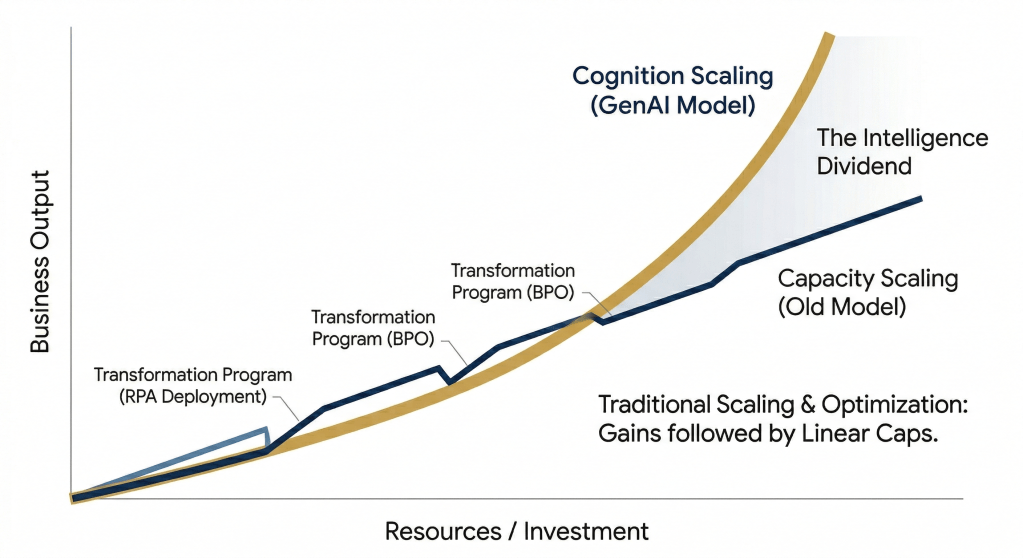

Enterprise transformation programs for the past two decades — whether ERP rollouts, CRM implementations, cloud migrations, or RPA deployments — were primarily optimizations within this linear model. They made the slope more efficient. They reduced the cost per unit of output. But they didn’t break the fundamental relationship. The line remained linear. It just got steeper.

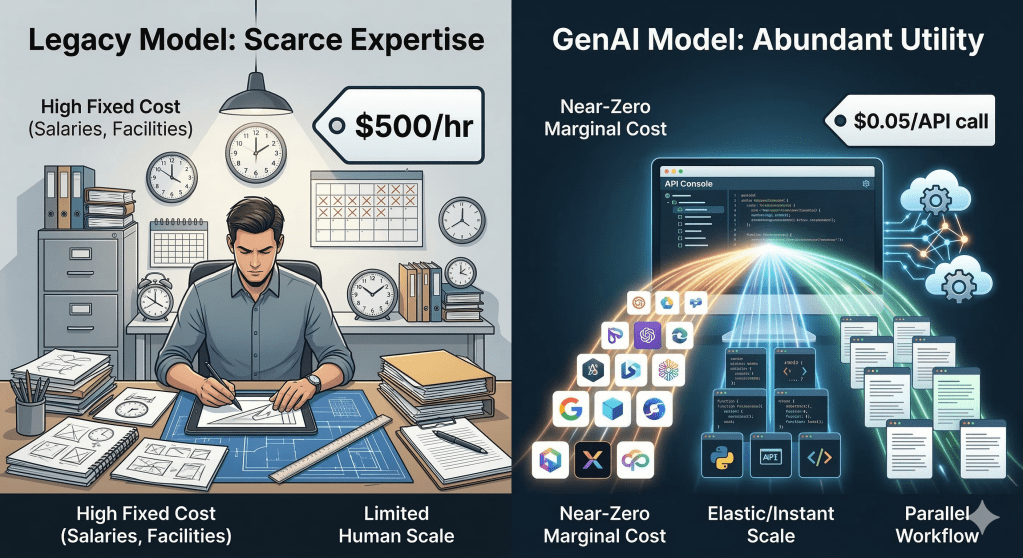

GenAI doesn’t optimize the slope. It changes the shape of the curve. The image below shows the difference between the old and new models of scaling.

The New Equation: Cognition Scaling

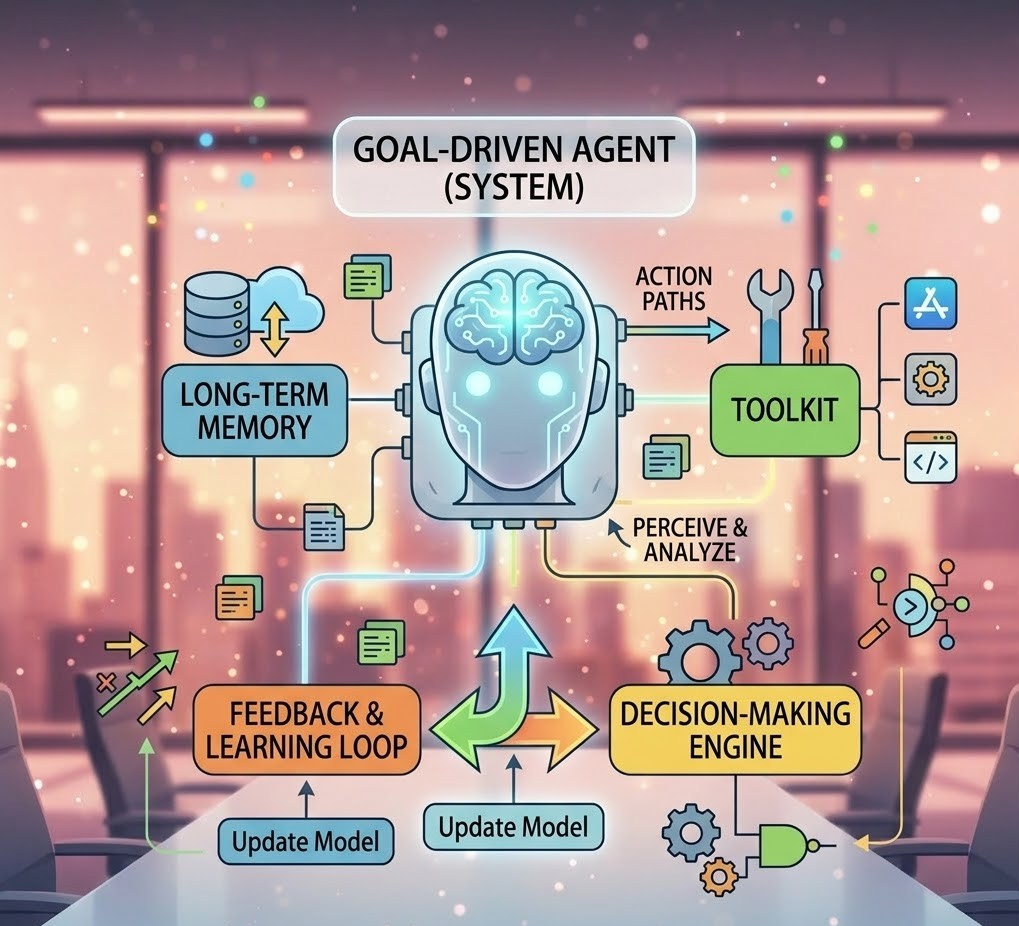

With GenAI, a more accurate equation emerges: Output ∝ Intelligence × Automation × Context

A single AI agent grounded in an enterprise knowledge base can handle thousands of customer support interactions simultaneously, interactions that would previously have required a proportional team. A language model deployed in a sales workflow can draft proposals, surface competitive insights, and personalize outreach at a volume no human team could match. An AI-assisted IT operations platform can detect anomalies, trace root causes, and trigger remediation without waiting for a human to act on a ticket.

The scaling variable is no longer manpower or infrastructure capacity. It is intelligence — and intelligence, once built, can be replicated at near-zero marginal cost.

This is the shift from capacity scaling to cognition scaling. And it is not incremental. It is structural.

| Dimension | Capacity Scaling (Old) | Cognition Scaling (New) |
|---|---|---|
| Growth driver | Resources added | Intelligence applied |
| Cost behavior | Linear with output | Near-fixed once deployed |
| Bottleneck | Headcount / Infrastructure | Orchestration / Governance |
| Competitive advantage | Operational efficiency | Speed and adaptability |
| Output ceiling | Bounded by budget | Bounded by design quality |
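To make the "Cost behavior" row concrete, here is a minimal Python sketch comparing the two cost curves. It is an illustration under stated assumptions, not a cost model: every number in it is a hypothetical placeholder rather than a benchmark, and the function names are made up for this example. Capacity scaling is modeled as cost proportional to volume; cognition scaling is modeled as a fixed build-and-govern cost plus a small per-unit run cost (for example, inference and monitoring), which is one simple reading of "near-fixed once deployed."

```python
# Illustrative comparison of the two cost curves described above.
# All figures are hypothetical placeholders, not benchmarks.


def capacity_cost(volume: int, cost_per_unit: float) -> float:
    """Capacity scaling: total cost grows in proportion to output volume."""
    return volume * cost_per_unit


def cognition_cost(volume: int, fixed_build_cost: float, marginal_cost: float) -> float:
    """Cognition scaling: a largely fixed build cost plus a small per-unit run cost."""
    return fixed_build_cost + volume * marginal_cost


def breakeven_volume(cost_per_unit: float, fixed_build_cost: float, marginal_cost: float) -> float:
    """Volume at which both models cost the same: F / (c - m)."""
    if cost_per_unit <= marginal_cost:
        raise ValueError("no break-even: marginal cost is not below the per-unit cost")
    return fixed_build_cost / (cost_per_unit - marginal_cost)


if __name__ == "__main__":
    # Hypothetical inputs: $5 per ticket handled by a human team,
    # $200,000 to build and govern an AI agent, $0.25 per ticket to run it.
    UNIT, FIXED, MARGINAL = 5.0, 200_000.0, 0.25
    for volume in (10_000, 50_000, 100_000, 500_000):
        old = capacity_cost(volume, UNIT)
        new = cognition_cost(volume, FIXED, MARGINAL)
        print(f"{volume:>8,} tickets  capacity=${old:>12,.0f}  cognition=${new:>12,.0f}")
    print(f"break-even at ~{breakeven_volume(UNIT, FIXED, MARGINAL):,.0f} tickets")
```

The break-even point is the detail worth noticing. Below it, the linear model is cheaper, because the fixed investment has not yet paid off; above it, each additional unit of output costs only the marginal rate, which is why the cognition curve bends away from the linear one as volume grows.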

The implications extend far beyond cost. When intelligence can be replicated, expertise is no longer scarce or localized. A single senior engineer’s knowledge, codified into an AI agent, could resolve, say, 70% of support tickets without that engineer being present. A handful of specialist analysts, augmented by AI, can deliver insights across an entire enterprise that previously required a department. The key point is this: you don’t just scale headcount, you scale the distribution of expertise.

The Evolution of the Traditional PPT Model — and GenAI Is Leading the Way

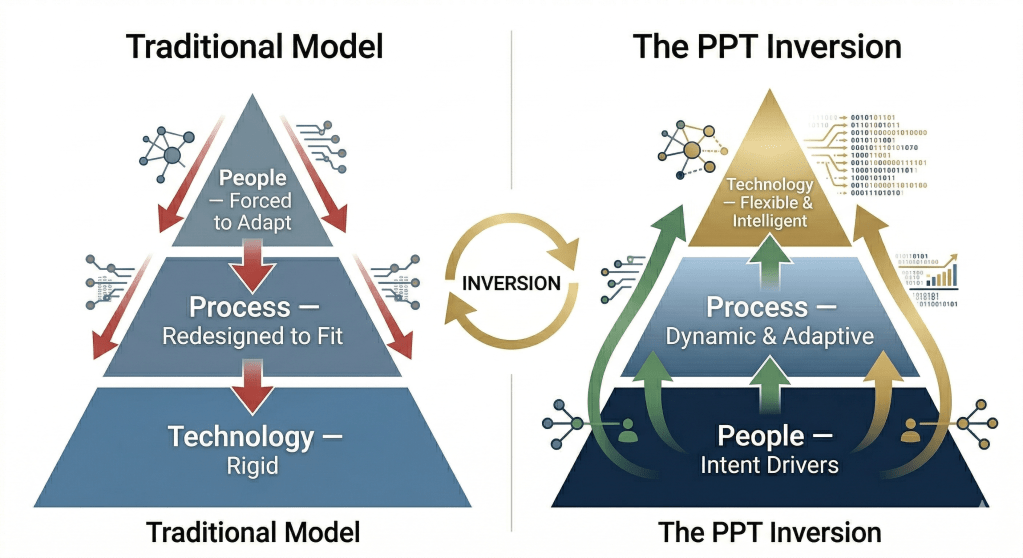

To understand the full magnitude of this shift, it helps to go back to a framework that every enterprise consultant has lived by: People, Process, Technology — the PPT model.

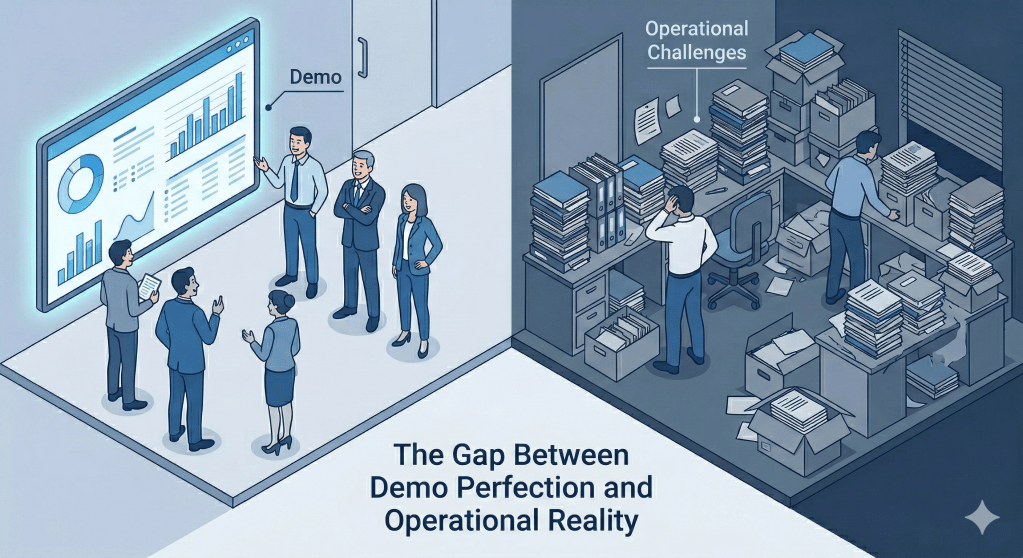

The PPT model positioned transformation as a balancing act across three dimensions. In theory, people, process, and technology were equal pillars. In practice, something very different happened.

Because enterprise technologies such as ERP systems, CRM platforms, and BPM engines were inherently rigid, they were almost always the constraint that everything else had to adapt to. Projects followed a predictable, uncomfortable pattern:

- Select the platform

- Reengineer processes to fit the platform’s workflow logic

- Retrain people to comply with the new processes

The human and process layers were routinely force-fitted to the technology. The phrase “change management” became a polite euphemism for pushing an organization through the friction of adapting to a system it didn’t design. Billions in transformation budgets were spent not on genuine capability improvement or major gains in output, but on adapting organizations to rigid software that sometimes delivered only marginal improvements.

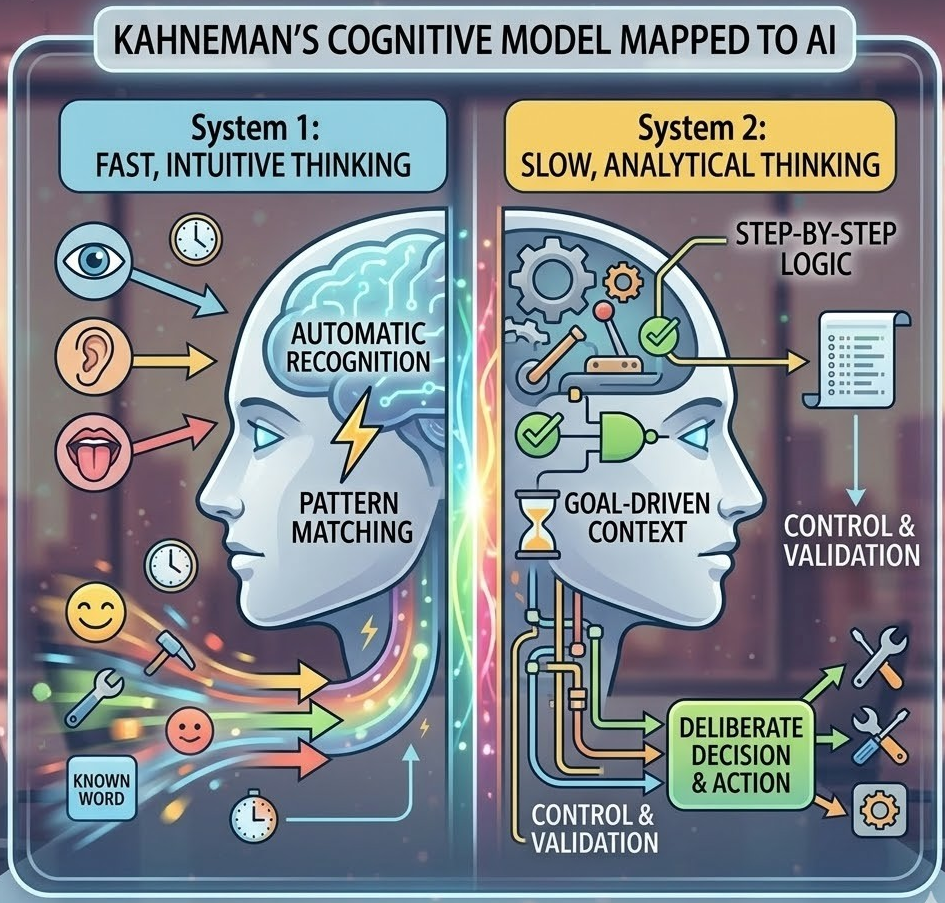

GenAI introduces something the PPT model was never built to accommodate: adaptive technology.

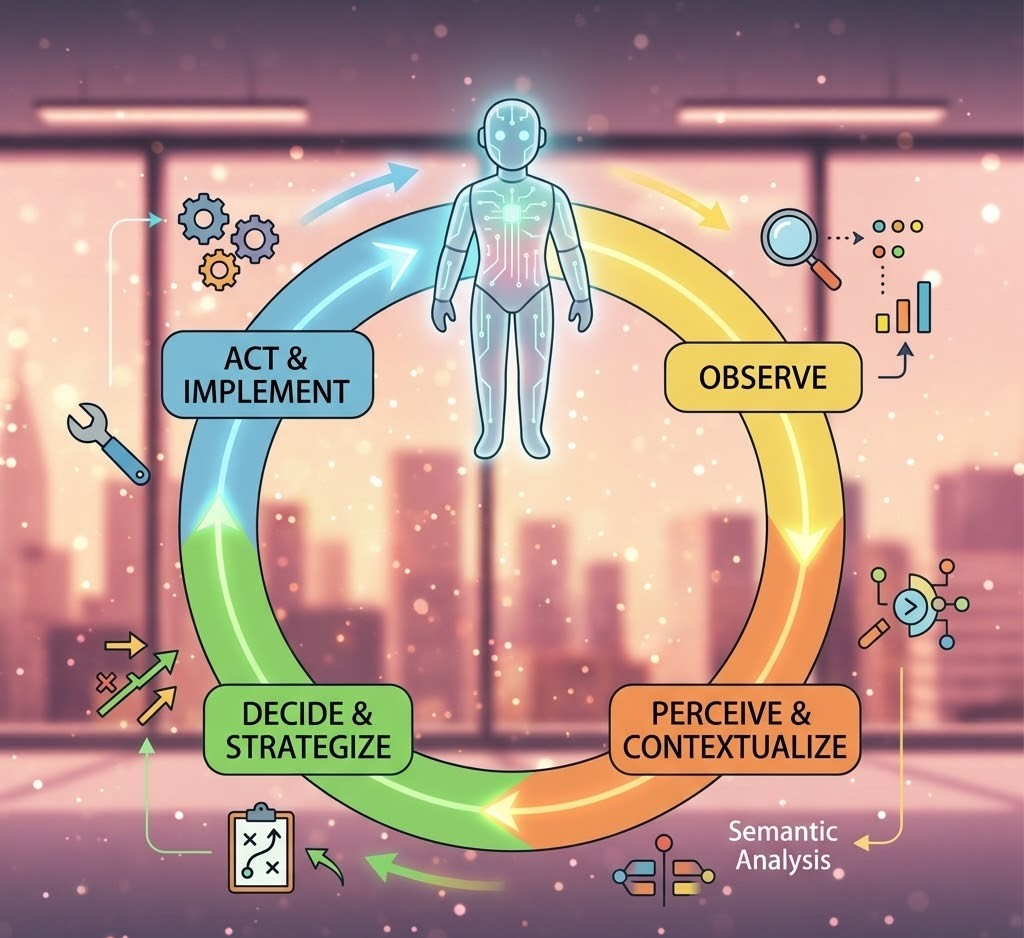

For the first time in enterprise history, technology is no longer the rigid layer that everything else must bend around. Consider what this means in practice: a traditional ERP system required a purchase order to follow a fixed sequence of steps (approve, validate, route, post) regardless of context. A GenAI system can read an email from a supplier, recognize that it contains an urgent pricing exception, and trigger the right escalation path without anyone defining that path in advance. It responds to intent (what the business is trying to achieve) rather than instruction (a pre-coded sequence of steps it must be told to follow). It adapts to how your organization actually communicates, rather than forcing your organization to communicate in ways the system can parse. And because it can be refined through feedback and usage over time, the system can improve through use rather than requiring a formal change request every time a process needs to evolve.

This is what we call the PPT Inversion:

In the traditional PPT model, technology was always the immovable anchor. Systems were purchased, installed, and then the organization was reshaped around them — processes rewritten, people retrained, workflows contorted to match what the software could accommodate. The technology constrained everything above it.

The PPT Inversion describes what happens when that relationship flips. GenAI is the first enterprise technology that can genuinely adapt to the organization, rather than requiring the organization to adapt to it. People become the drivers of intent — defining what outcomes matter. Technology becomes the most flexible layer — figuring out how to achieve them. Process becomes dynamic — emerging and evolving between the two, rather than being prescribed in advance.

| Old Model | The PPT Inversion |
|---|---|
| Technology is fixed; people adapt | Technology adapts; people drive intent |
| Processes are redesigned to fit systems | Systems generate and evolve processes |
| Change management = retraining to comply | Change management = redefining roles |
| “Fit the organization into the system” | “System shapes itself around the organization” |

The PPT Inversion doesn’t eliminate the three pillars. People, process, and technology remain essential. But their roles are redistributed:

- People move from system users and process executors to intent drivers and supervisors of AI.

- Processes move from rigid, pre-designed blueprints to dynamic, adaptive intelligence flows.

- Technology moves from a system of execution to a system of cognition — the most flexible layer, not the most constraining one.

For the first time in enterprise history, technology is no longer the rigid layer — it is becoming the most flexible layer.

This is not a minor philosophical update. It fundamentally changes how enterprises should think about transformation. The question shifts from “How do we get our people to adapt to this new system?” to “How do we design intelligence that amplifies how our people naturally work?”

What Stayed the Same — and Why That Matters

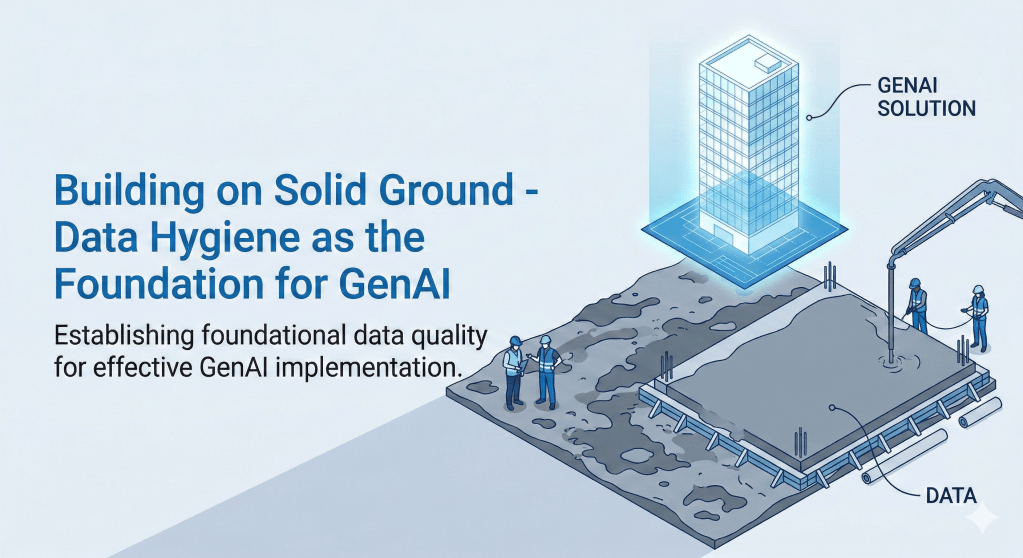

It would be easy to get carried away by the hype and to overstate the case. GenAI is transformative, but it is not unconditional.

Two critical things have not changed:

First, the precision requirement. Non-linear scale amplifies both the upside and the exposure. When an AI agent operates across thousands of simultaneous interactions, the blast radius of a logic error shifts from a single transaction to an entire workflow. This is why governance, guardrails, and observability are not optional additions to a GenAI strategy. They are load-bearing infrastructure. The intelligent operating models we explore throughout this series assume these foundations are in place. (Governance architecture deserves dedicated treatment, which we will give it separately in this series.)

Second, the complexity of orchestration. The new bottleneck in cognition scaling is not capacity; it is orchestration. Coordinating multiple AI agents, managing shared context, aligning tool usage with business outcomes, and maintaining consistency across intelligence pipelines requires sophisticated design. Enterprises that invest only in AI models and agent deployment, without investing in orchestration, will find themselves with powerful tools they cannot reliably harness.
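The orchestration problem can be made concrete with a deliberately small Python sketch. It is a toy under stated assumptions, not a production design: the "agents" are plain functions, the keyword check in `classify_agent` is a hypothetical stand-in for a real model call, and every name (`SharedContext`, `orchestrate`, `guardrail`) is invented for this illustration. What it shows is the shape of the problem: agents share one context, every step is recorded for observability, and a guardrail is checked after each step so that a bad output halts the workflow instead of propagating through it.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SharedContext:
    """State that every agent in the pipeline reads from and writes to."""
    request: str
    facts: dict[str, str] = field(default_factory=dict)
    trace: list[str] = field(default_factory=list)  # observability: ordered record of steps


# An agent is any callable that reads and updates the shared context.
Agent = Callable[[SharedContext], None]


def classify_agent(ctx: SharedContext) -> None:
    # Hypothetical stand-in for a model call that infers the sender's intent.
    intent = "pricing_exception" if "price" in ctx.request.lower() else "general"
    ctx.facts["intent"] = intent
    ctx.trace.append("classify")


def draft_agent(ctx: SharedContext) -> None:
    # Relies on the intent written by the previous agent via the shared context.
    ctx.facts["draft"] = f"Escalate {ctx.facts['intent']} to the pricing desk"
    ctx.trace.append("draft")


def guardrail(ctx: SharedContext) -> bool:
    # Hypothetical policy: no draft may promise a discount on its own authority.
    return "discount" not in ctx.facts.get("draft", "").lower()


def orchestrate(request: str, agents: list[Agent],
                check: Callable[[SharedContext], bool]) -> SharedContext:
    """Run agents in order over one shared context, checking policy after each step."""
    ctx = SharedContext(request)
    for agent in agents:
        agent(ctx)
        if not check(ctx):  # validate after every step, not only at the end
            ctx.trace.append("halted_by_guardrail")
            break
    return ctx


if __name__ == "__main__":
    result = orchestrate("Urgent: supplier price change on an open order",
                         [classify_agent, draft_agent], guardrail)
    print(result.facts, result.trace)
```

Checking the guardrail after every step, rather than once at the end, is the design choice that limits the blast radius: a failing step stops the pipeline before downstream agents act on its output.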

Scaling intelligence requires stronger control systems than scaling infrastructure.

The opportunity is real. But so is the design challenge.

A Preview of What is Coming Next

The shift in the scaling paradigm described in this post is only the foundation. Its impact cascades through every dimension of enterprise operations, and each of those dimensions demands a new way of thinking.

The remaining posts in the series map three of those dimensions:

Post 2 — The Operating Model: When the growth equation changes, the enterprise operating model has to change with it. We’ll map how GenAI transforms five layers of enterprise operations — from leadership decision-making to frontline execution — and why the future operating model is built on intelligence loops, not process pipelines.

Post 3 — The Execution Strategy: Non-linear scale does not mean uniform automation. Enterprises need a practical framework for deciding where to apply full AI autonomy, where to deploy AI as an augmentation layer, and where to keep humans firmly in control. Post 3 introduces the GenAI Operating Spectrum — three modes, one decision framework.

Post 4 — The Financial Case: None of this matters unless it translates to business outcomes. Post 4 addresses the CFO conversation directly — mapping each dimension of the framework to measurable financial levers, and introducing a three-layer ROI model that captures the full value of intelligence investment, not just the efficiency gains that most cost-benefit analyses miss.

The Question Every Enterprise Leader Should Ask Today

The old growth playbook assumed that scale was a capacity problem. Buy more. Hire more. Build more.

GenAI reframes it as a cognition problem. Design better. Orchestrate smarter. Deploy intelligence where it creates the most leverage.

The enterprises that recognize this shift now will have a structural advantage that compounds over time. Those that optimize their existing linear model, however elegantly, will find competitors that leverage GenAI reaching entirely different points on an entirely different curve.

The question is not whether your organization will adopt GenAI. The question is whether you will adopt it as a tactical tool, or as a new architecture for scale.

There is a significant difference between those two answers — and the choice enterprises make now will define their growth ceiling for the next decade.

This is Post 1 of 4 in the series “Rethinking Enterprise Scaling in the Era of GenAI.” Post 2, “Rewiring the Enterprise: How GenAI Transforms Your Operating Model End-to-End,” explores how the five layers of enterprise operations must be redesigned when intelligence, not process, becomes the organizing principle.