Introduction: What Was Missing

Quick recap (from Part 1): We saw that LLMs are very good at understanding meaning (semantics) and even at reasoning step‑by‑step, but they still don’t decide or act on their own.

That realization raised the next natural question, which this part takes up: if understanding and reasoning aren’t enough, what actually enables intelligent behavior?

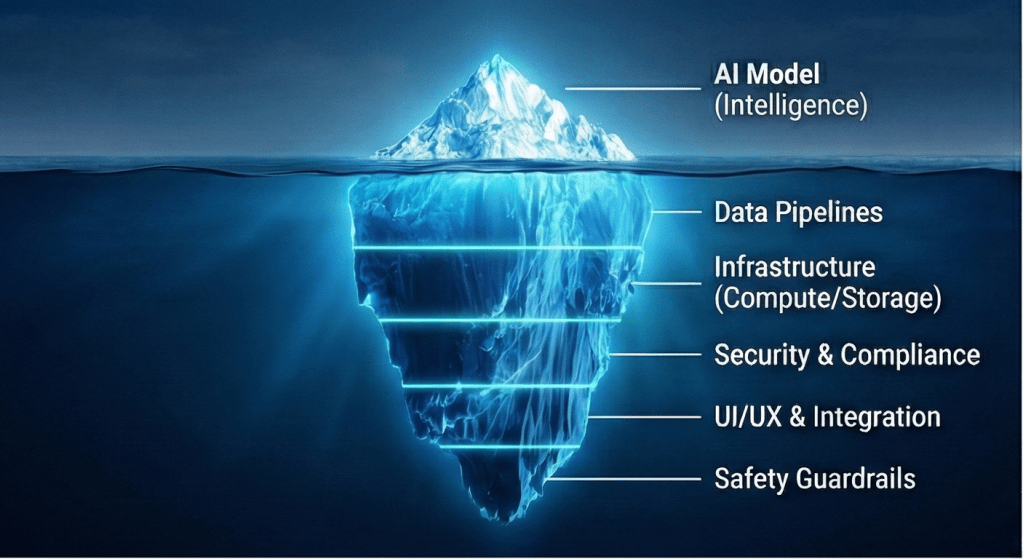

In this post, I want to share what I learned about the missing layer: cognitive capability, and how AI agents introduce it through architecture rather than model intelligence.

Cognitive Capability (Knowing What to Do Next)

This question brought me to the concept of cognitive capability — in simple terms, the ability of a system to decide and act, not just explain or understand.

Unlike semantic understanding or reasoning, cognitive capability is not about explaining information — it is about using information.

It answers the question: “What should I do with this information?”

Cognitive capability includes:

- Setting goals

- Making decisions

- Taking actions

- Learning from results

Humans do this seamlessly, often without realizing it.

AI systems, however, do not gain this capability just by becoming better at language or reasoning. They require a different kind of design.

This distinction made the gap between humans and AI much clearer — and it naturally pointed to the concept of agents as the missing architectural layer.

AI Agents (Adding a Brain Around the Model)

Once cognition became the focus, AI agents entered the picture naturally. At this point in my thinking, agents stopped feeling like a buzzword and started feeling like a design necessity.

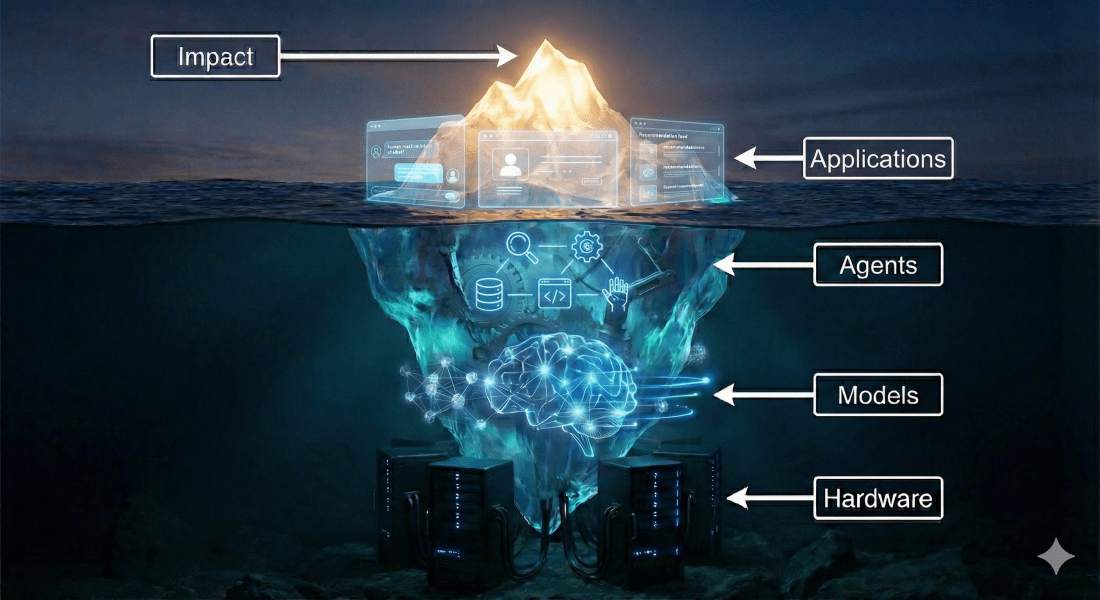

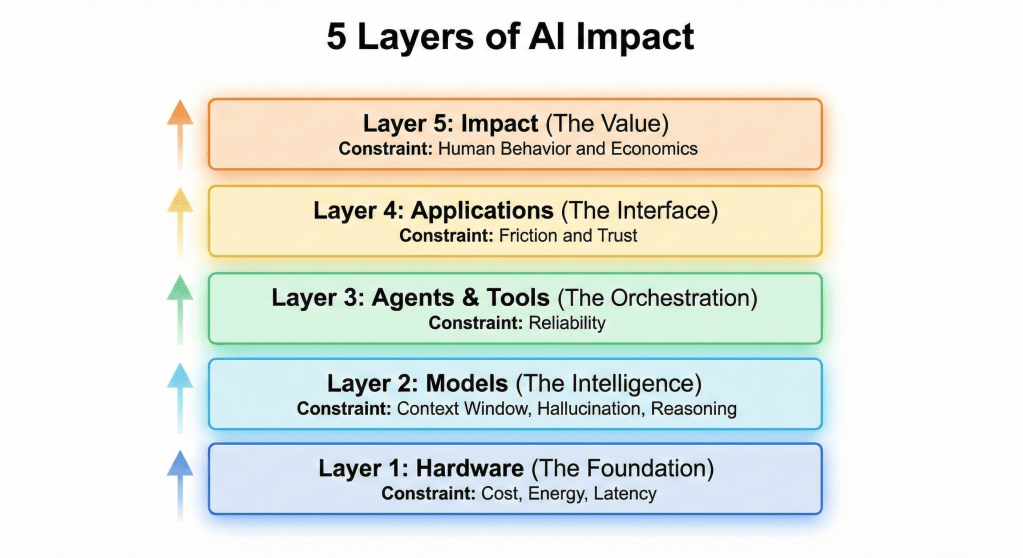

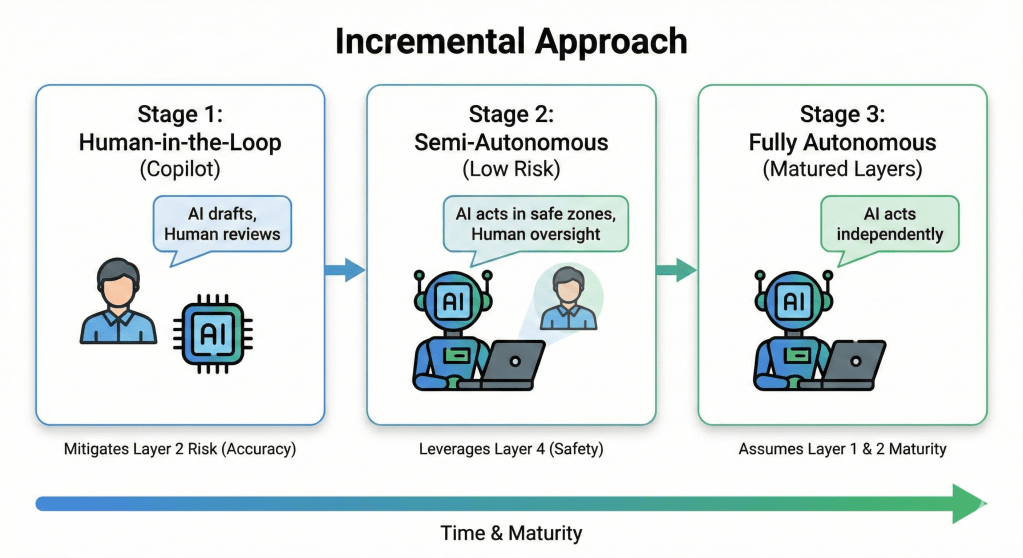

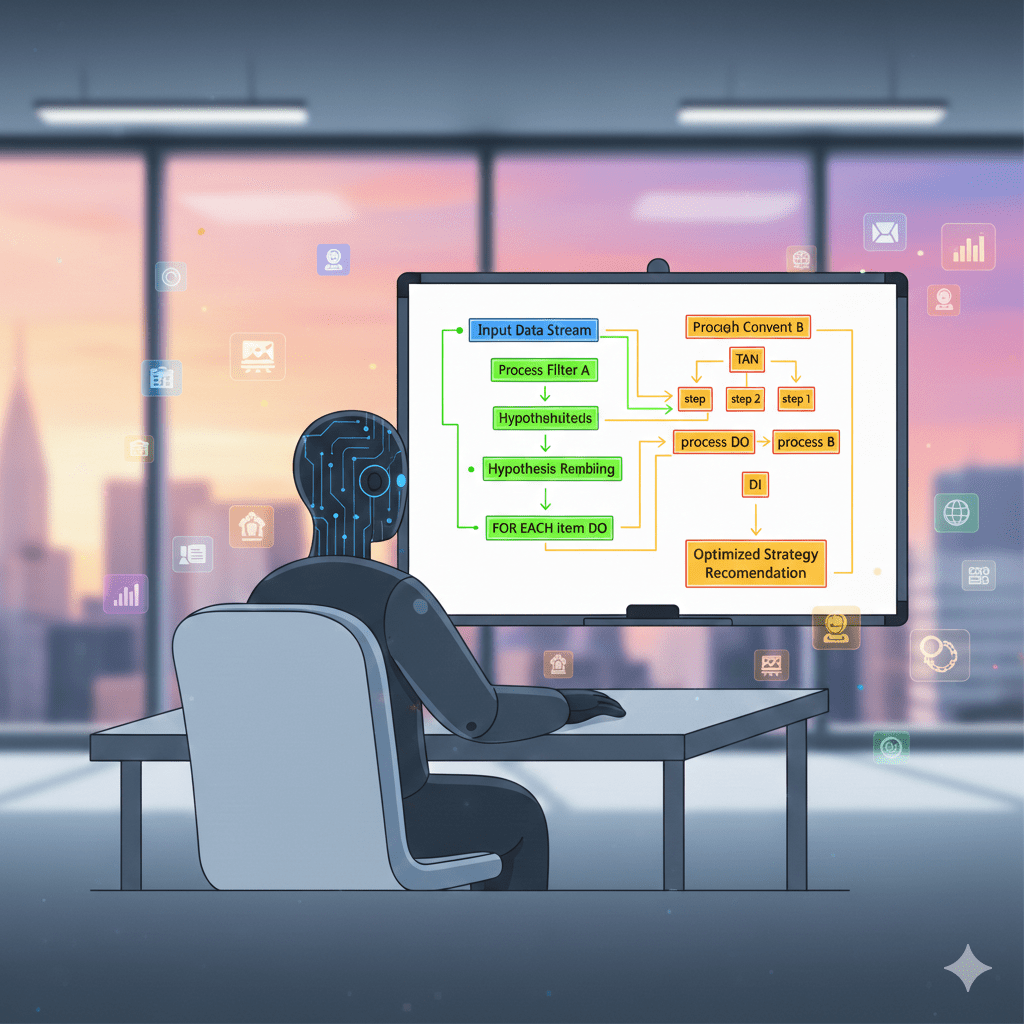

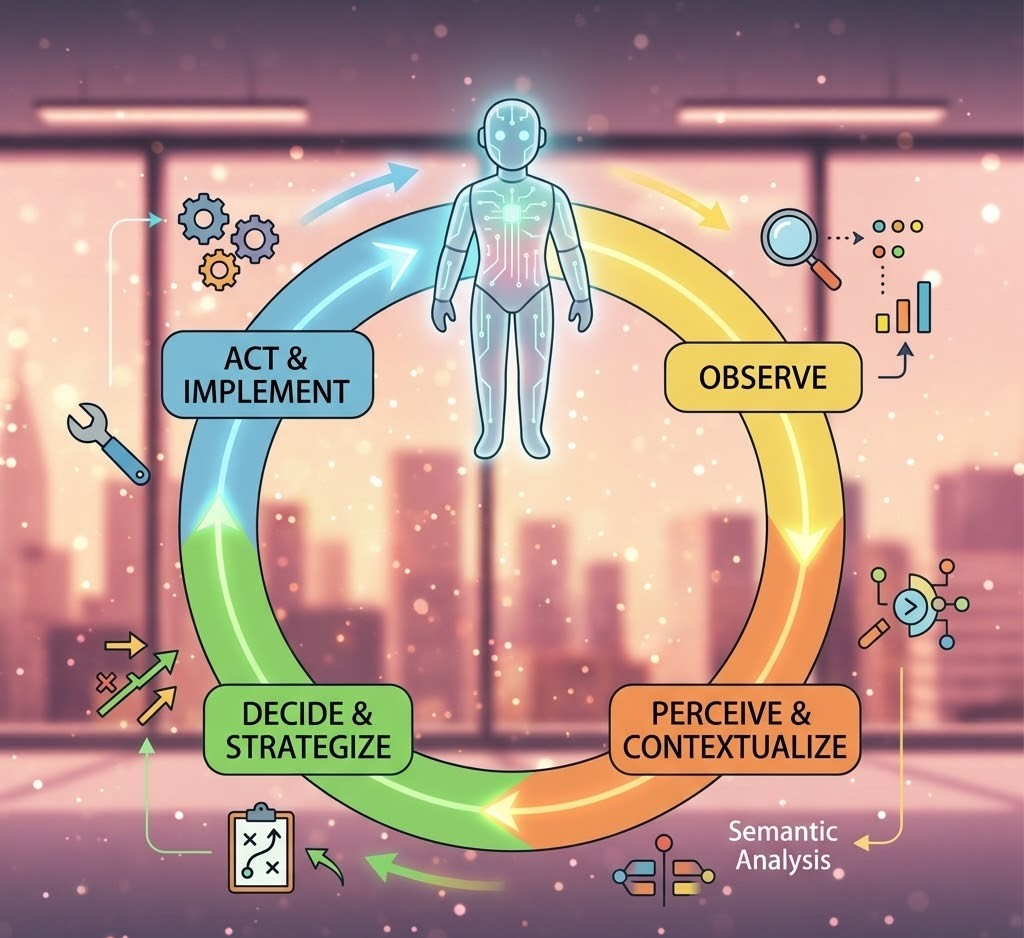

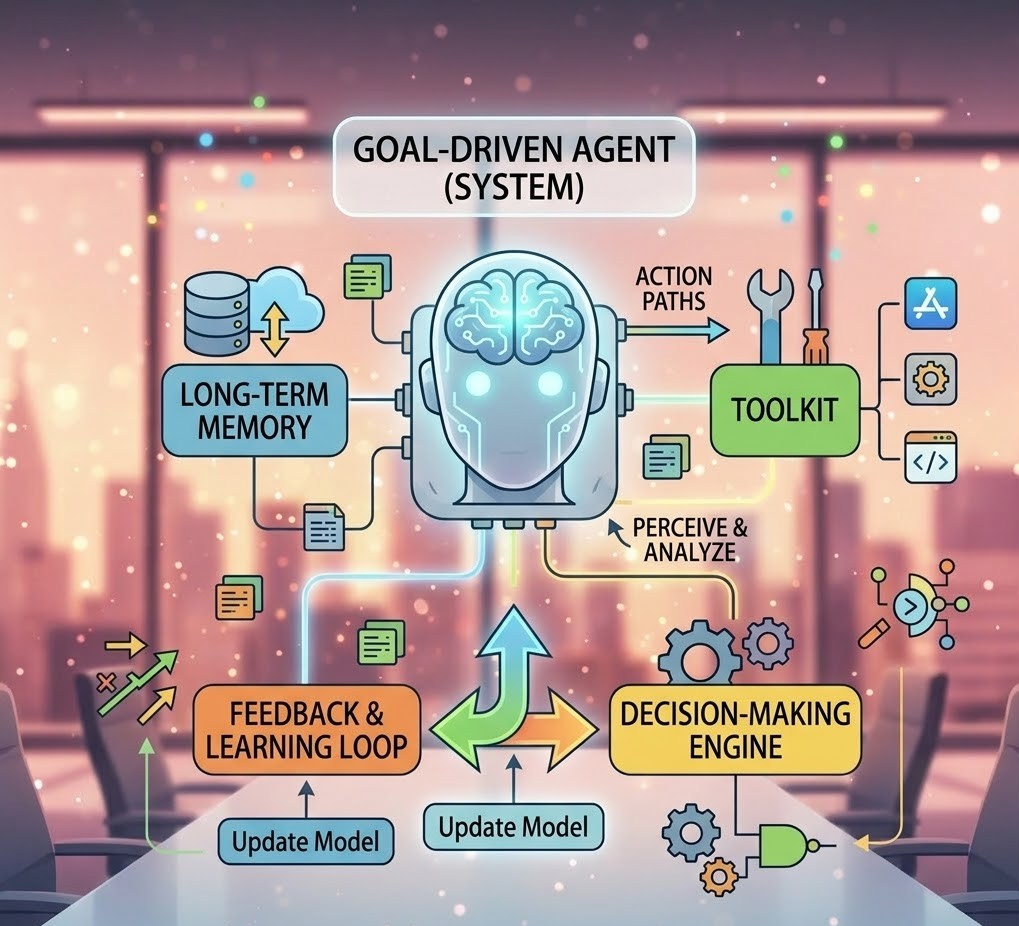

An AI agent is not just a smarter model — it is a system where a model is embedded inside a control loop.

That loop:

- Observes information

- Decides what to do

- Takes action using tools or systems

- Checks the outcome

- Adjusts its next step
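As a rough sketch, the loop above could look like this in Python. This is only an illustration under simplifying assumptions, not a real agent framework: `fake_model`, `TOOLS`, and `run_agent` are hypothetical names, and the "model" is a hard-coded stand-in for an LLM call so that the example runs on its own.

```python
from typing import Callable

# Hypothetical tools the agent can call; a real system would wrap APIs, search, etc.
TOOLS: dict[str, Callable[[int], int]] = {
    "double": lambda x: x * 2,
    "add_one": lambda x: x + 1,
}

def fake_model(state: int, goal: int) -> str:
    """Stand-in for an LLM call: proposes a tool name for the current observation."""
    return "double" if state * 2 <= goal else "add_one"

def run_agent(start: int, goal: int, max_steps: int = 20) -> list[str]:
    """Control loop: observe, decide, act, check, adjust."""
    state = start
    history: list[str] = []                # simple memory of the actions taken
    for _ in range(max_steps):             # hard limit so the loop always ends
        if state == goal:                  # check the outcome against the goal
            break
        choice = fake_model(state, goal)   # decide: the model only proposes a step
        state = TOOLS[choice](state)       # act: the loop runs the chosen tool
        history.append(choice)             # remember what was done
    return history

print(run_agent(start=1, goal=10))
```

The point of the sketch is that the model function never changes. What makes the system goal-directed is the loop around it: the goal check, the tool call, and the step limit all live outside the model.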

In this arrangement, roles become clear:

- The LLM understands language

- The agent owns decisions and actions

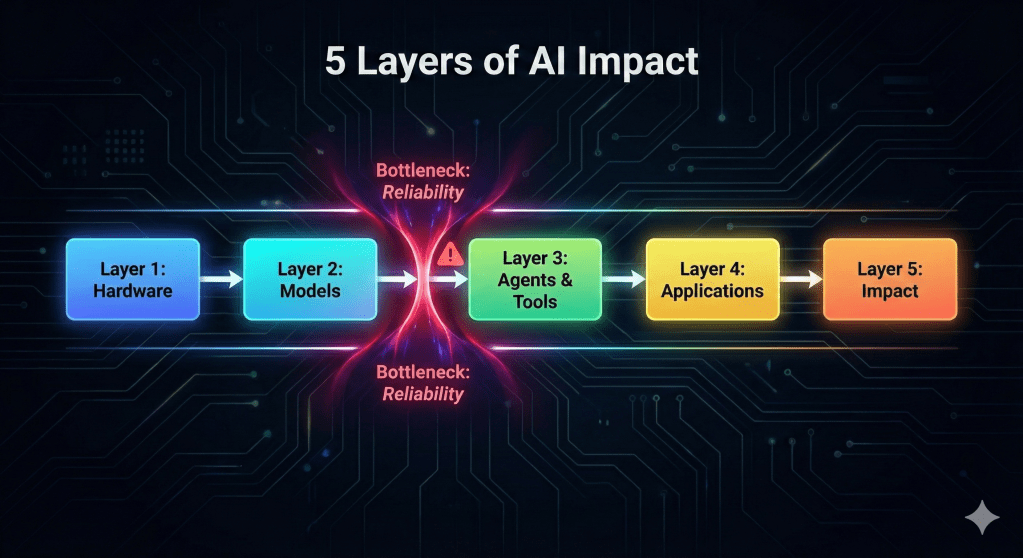

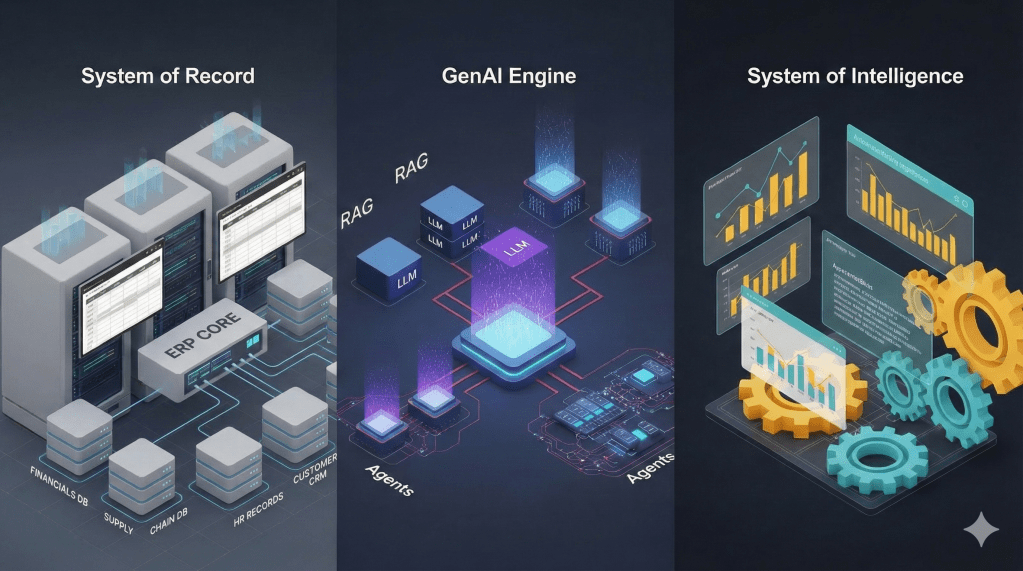

This is when I realized why the various concepts I kept reading about and hearing on podcasts play an important role in building a full AI system. I finally understood why adding tools, memory, and feedback suddenly makes AI systems feel more capable: not because the model changed, but because cognition was introduced at the system level.

With this in mind, I started wondering again how closely this maps to human thinking, and whether humans use a similar separation between fast understanding and deliberate control.

System 1 and System 2 (How Humans Think)

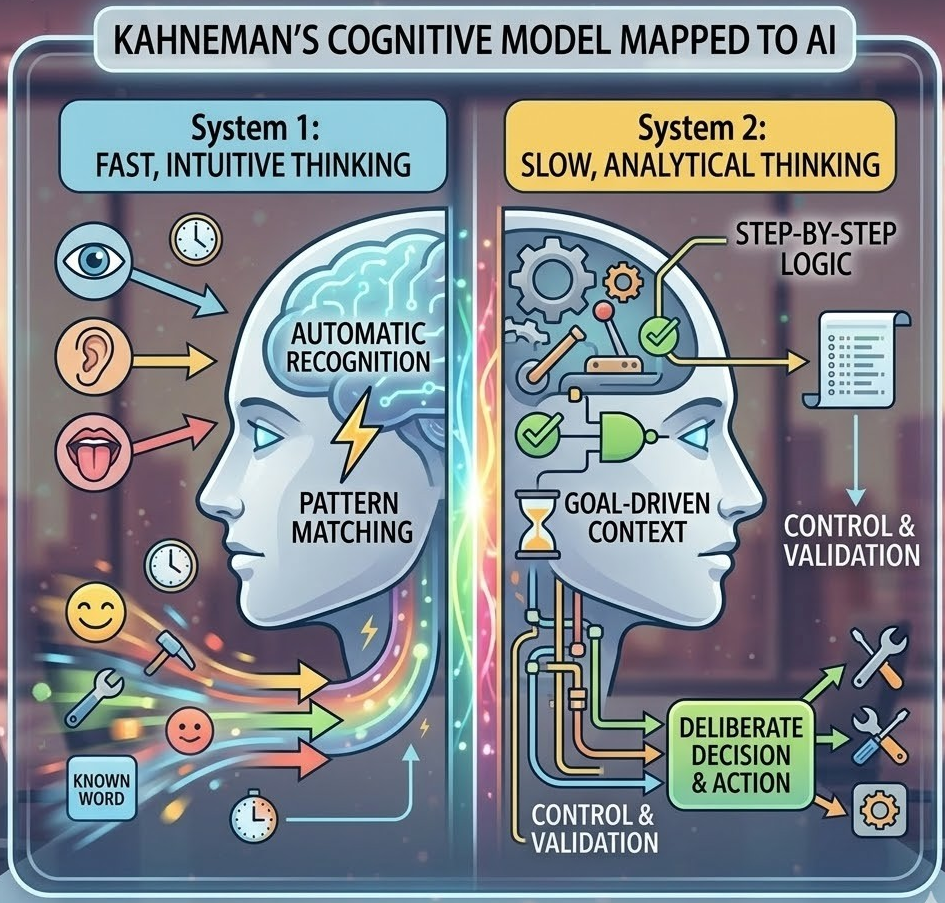

To make sense of this comparison, it helped to borrow a well-known model from psychology that explains how humans think at different speeds.

Psychologist Daniel Kahneman described two ways humans think:

System 1 – Fast Thinking

- Automatic

- Intuitive

- Pattern-based

Example: Instantly recognizing a familiar face.

System 2 – Slow Thinking

- Deliberate

- Logical

- Effortful

Example: Carefully solving a math problem.

Mapping This to AI

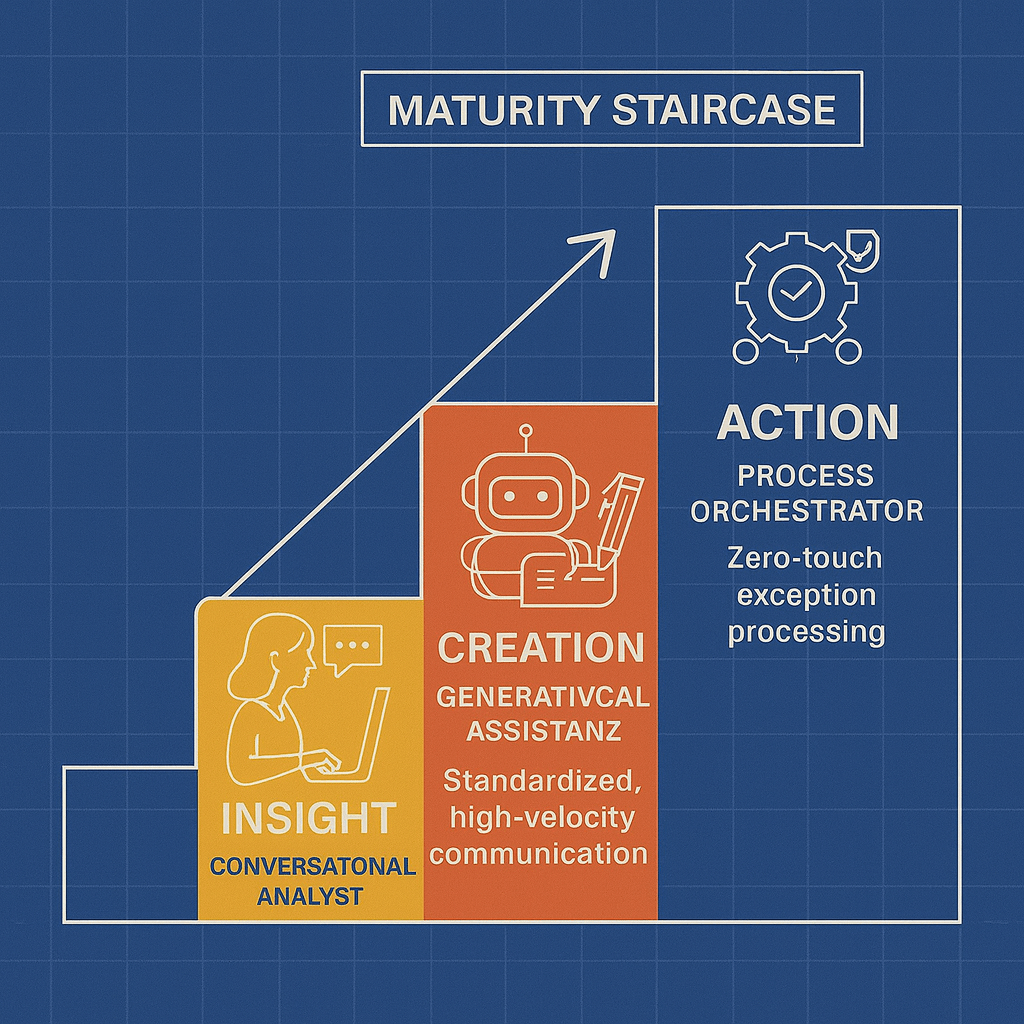

- LLMs behave like System 1 — fast, fluent, intuitive

- Agents behave like System 2 — slow, deliberate, controlling decisions and actions
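One way to picture this mapping, purely as an illustration: a fast function answers instantly from memorized patterns, and a slower controller checks that answer before accepting it. Everything here is hypothetical (`fast_answer`, `slow_compute`, `deliberate`, and the lookup table), and the "fast" part is a toy lookup rather than a real LLM.

```python
from typing import Optional

# Toy "System 1": instant, pattern-based recall that can be wrong.
MEMORIZED = {"2+2": 4, "3*3": 9, "7*8": 54}   # the 7*8 entry is deliberately wrong

def fast_answer(question: str) -> Optional[int]:
    """Return a memorized answer immediately, without checking it."""
    return MEMORIZED.get(question)

def slow_compute(question: str) -> int:
    """Toy "System 2" step: work the answer out explicitly."""
    for op in ("+", "*"):
        if op in question:
            left, right = (int(part) for part in question.split(op))
            return left + right if op == "+" else left * right
    raise ValueError(f"unsupported question: {question}")

def deliberate(question: str) -> tuple[int, str]:
    """Controller: take the fast guess, verify it, and correct it if needed."""
    guess = fast_answer(question)
    checked = slow_compute(question)
    if guess == checked:
        return checked, "fast answer accepted"
    return checked, "recomputed deliberately"

print(deliberate("2+2"))
print(deliberate("7*8"))
```

In this toy version the controller always does the slow check, which would be wasteful in practice. It is written that way only to show where the control sits: the fast component produces an answer, and a separate layer decides whether to trust it.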

This mapping helped me clarify why agents feel qualitatively different from standalone models — they introduce control, not just intelligence. That control is what allows systems to pause, decide, and act intentionally.

Conclusion: In AI Systems, Cognition Is Architectural

Bringing these ideas together helped solidify the story so far: better models improve understanding, but better architecture enables cognition.

This part reinforced a key insight for me: cognition does not emerge automatically from better reasoning. It emerges from architecture — from systems that can observe, decide, act, and learn.

In the final part, I will share my understanding of why humans still outperform AI in ambiguous situations, where agents fall short of human cognition, and why this does not diminish the value of today’s AI systems.

Author’s Note: AI-assisted writing tools were used to support the creation of this post. All concepts, perspectives, and the underlying thought process originate from me; the AI served only as a drafting and refinement aid.