Executive Summary

- AI systems accumulate hidden “sprawl” across tools, prompts, workflows, and context.

- Sprawl increases cost, risk, and operational friction.

- A modular, governed architecture reduces chaos and improves reliability.

- Treat sprawl as a first‑class design problem, not an afterthought.

Introduction

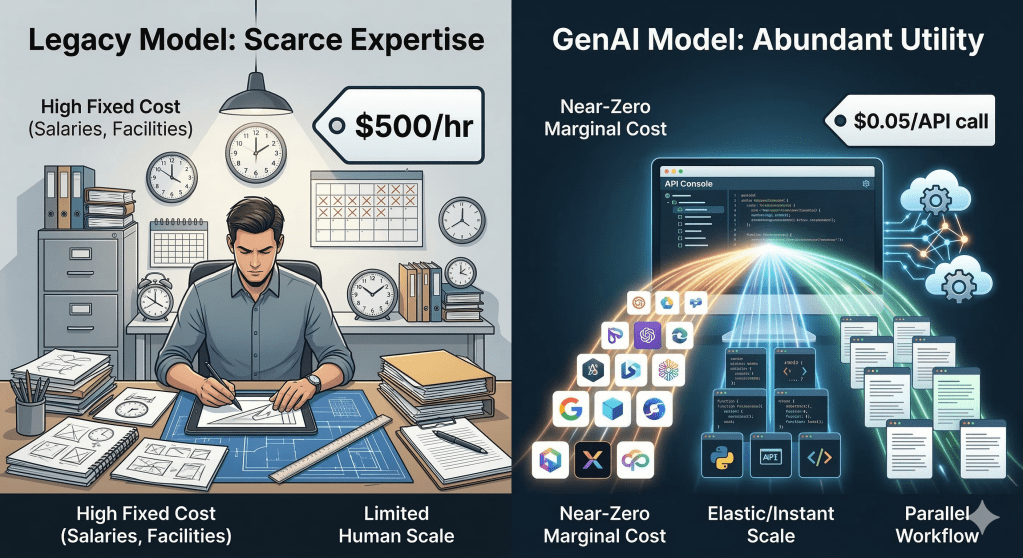

Enterprises are increasingly trying to move fast in adopting Generative AI and Agentic AI solutions, and rightly so. The reasons are:

- The opportunity is real: automation at scale, faster decision-making, and entirely new operating models.

- The proliferation and maturing of technologies and capabilities in the Generative and Agentic AI space.

At the same time, a new set of challenges is emerging from early adopters:

Agentic AI, if left ungoverned, will create the next wave of enterprise tech debt, and that debt is likely to accumulate faster than under any prior paradigm.

What we are beginning to see is not just experimentation, but uncontrolled proliferation. The industry has started to call this “sprawl,” and there have been many discussions, research efforts, and proposed solutions to address the challenge.

In this article, I want to briefly introduce readers to the concept of sprawl in Agentic AI adoption, the various types of sprawl, their impact, and some suggestions on how to handle it.

What is “Sprawl” in Agentic AI?

In simple terms, sprawl is what happens when innovation and adoption outpace control.

In Agentic AI, it manifests as:

- Too many agents

- Too many tools and integrations

- Too many models

- Too many versions of prompts, workflows, and data pipelines

All of this is built independently, without common governance, shared principles, or a unifying architecture.

The result is fragmentation, inconsistency, and risk.

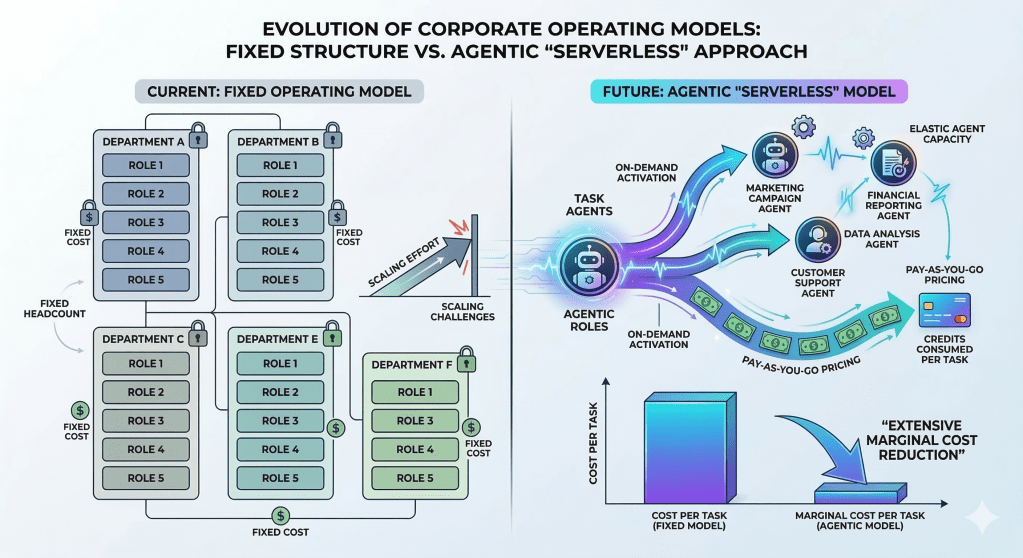

While sprawl is not new to enterprise technology adoption, the pace at which Agentic AI can proliferate is unprecedented. Unlike previous technology paradigm shifts, the low barrier to entry and rapid capability maturation means sprawl can become unmanageable at scale far more quickly—making early governance not just prudent, but essential.

The Types of Sprawl

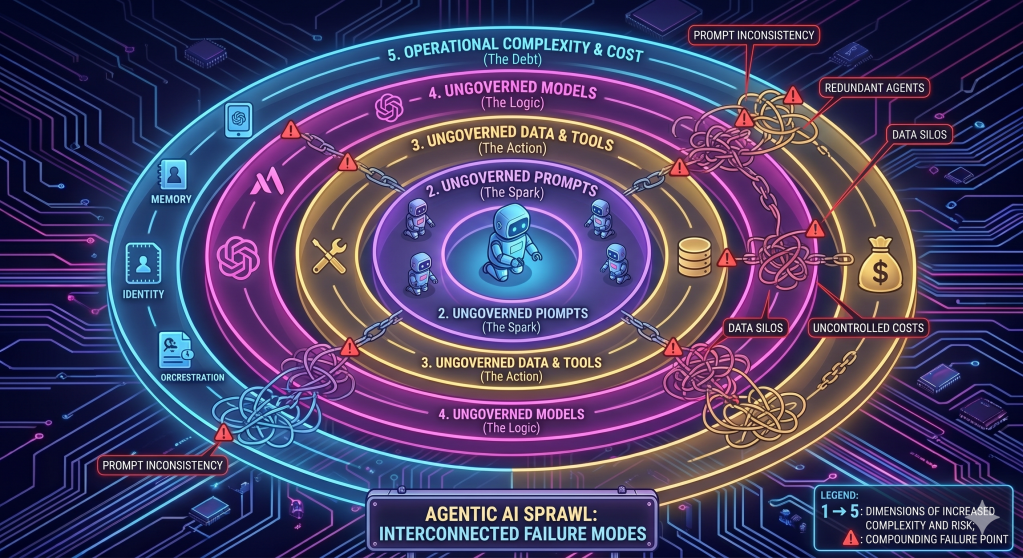

Before diving into the various types of sprawl in the Agentic AI space, it helps to understand the anatomy of an agent. As the image illustrates, an agent sits at the center of a universe of capabilities. It expands outward through the tools it uses, the models it runs on, the prompts that guide it, the data it consumes, and finally the operational concerns of memory, identity, orchestration, observability, and cost.

Each ring represents a dimension where uncontrolled growth compounds the ones before it. An ungoverned agent spawns ungoverned tool integrations. Ungoverned tools expose ungoverned data. Ungoverned data creates ungoverned cost and compliance risk.

Understanding sprawl this way makes one thing clear: these are not isolated problems—they are interconnected failure modes. Addressing only one or two of them is insufficient.

In this section, we cover each type of sprawl across these dimensions.

1. Agent Sprawl

Cause:

Teams rapidly build agents for similar use cases without coordination.

Real-world pattern: Customer service, sales, and operations teams each deploy their own “assistant” with overlapping responsibilities.

Impact:

- Duplicate capabilities

- Inconsistent outcomes

- No clear ownership or lifecycle
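
One way to counter agent sprawl is to make registration mandatory and to check each new agent against what already exists. The sketch below is a minimal, hypothetical registry (`AgentRecord`, `AgentRegistry`, and the 0.5 threshold are illustrative assumptions, not a specific product): every agent must declare an owner and a capability list, and a new agent whose capabilities overlap heavily with a registered one (measured here with Jaccard similarity) is rejected so the teams can reuse or extend instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical registry entry: every agent declares an owner and its capabilities."""
    name: str
    owner: str
    capabilities: frozenset[str]


class AgentRegistry:
    """Minimal in-memory registry; a real platform would persist this behind an API."""

    def __init__(self, overlap_threshold: float = 0.5) -> None:
        self.overlap_threshold = overlap_threshold  # assumed cut-off, tune per organization
        self._agents: dict[str, AgentRecord] = {}

    @staticmethod
    def _jaccard(a: frozenset[str], b: frozenset[str]) -> float:
        # Shared capabilities divided by all distinct capabilities across both agents.
        union = a | b
        return len(a & b) / len(union) if union else 0.0

    def find_overlaps(self, candidate: AgentRecord) -> list[tuple[str, float]]:
        """Return (name, score) for registered agents at or above the overlap threshold."""
        matches = []
        for existing in self._agents.values():
            score = self._jaccard(candidate.capabilities, existing.capabilities)
            if score >= self.overlap_threshold:
                matches.append((existing.name, round(score, 2)))
        return matches

    def register(self, candidate: AgentRecord) -> None:
        if not candidate.owner:
            raise ValueError(f"agent {candidate.name!r} has no owner")
        if candidate.name in self._agents:
            raise ValueError(f"agent {candidate.name!r} is already registered")
        overlaps = self.find_overlaps(candidate)
        if overlaps:
            raise ValueError(
                f"agent {candidate.name!r} overlaps with {overlaps}; reuse or extend instead"
            )
        self._agents[candidate.name] = candidate


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register(AgentRecord("support-assistant", "cx-team",
                                  frozenset({"order_lookup", "refund_status", "faq"})))
    try:
        # Sales builds a near-identical assistant: 2 of 4 distinct capabilities are shared.
        registry.register(AgentRecord("sales-assistant", "sales-team",
                                      frozenset({"order_lookup", "refund_status", "pricing"})))
    except ValueError as err:
        print(err)
```

The rejection is deliberately a conversation starter rather than a hard wall: the error names the existing agent and its owner's registration, which is exactly the coordination step that is missing when teams build in isolation.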

2. Tool and Integration Sprawl

Cause:

Agents directly integrate with enterprise systems using inconsistent approaches.

Real-world pattern: Some agents call APIs directly, others use middleware, and some embed credentials in code.

Impact:

- Security exposure

- Tight coupling to backend systems

- High maintenance overhead

3. Model Sprawl

Cause:

Different teams adopt different models without alignment.

Real-world pattern: Multiple LLM providers and open-source models used for similar workloads.

Impact:

- Inconsistent responses

- Cost inefficiency

- Compliance risks (data handling, residency)
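
A common mitigation is an approved model catalog that every team selects from, with data-handling rules recorded alongside each entry. In this sketch the model IDs, regions, prices, and classification labels are all placeholders, not real providers or rates. `select_model` returns the cheapest approved model that satisfies both the workload's data classification and its residency requirement, and fails loudly when nothing qualifies rather than letting a team fall back to an unvetted model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    model_id: str                 # placeholder identifier, not a real product name
    regions: frozenset[str]       # where the model processes data
    approved_for: frozenset[str]  # data classifications this model is cleared for
    cost_per_1k_tokens: float     # illustrative blended price, not a real rate


# Hypothetical enterprise catalog maintained by a central platform team.
CATALOG = [
    ModelEntry("hosted-large", frozenset({"us", "eu"}),
               frozenset({"public", "internal"}), 0.010),
    ModelEntry("hosted-small", frozenset({"us", "eu"}),
               frozenset({"public"}), 0.002),
    ModelEntry("self-hosted-oss", frozenset({"eu"}),
               frozenset({"public", "internal", "confidential"}), 0.004),
]


def select_model(classification: str, region: str) -> ModelEntry:
    """Return the cheapest catalog model cleared for this data class and region."""
    candidates = [
        m for m in CATALOG
        if classification in m.approved_for and region in m.regions
    ]
    if not candidates:
        raise LookupError(f"no approved model for {classification!r} data in {region!r}")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)
```

With this catalog, internal EU workloads resolve to `self-hosted-oss`, while confidential US workloads raise `LookupError` and go to review instead of to whichever model a team happens to prefer.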

4. Prompt Sprawl

Cause:

Prompts evolve independently with no versioning or validation.

Real-world pattern: Multiple prompt variations exist for the same use case, with no clarity on which is production-grade.

Impact:

- Unpredictable behavior

- Difficult debugging

- No auditability
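
Treating prompts as versioned artifacts addresses all three impacts. The sketch below assumes a hypothetical in-memory `PromptRegistry`: each published text gets an incrementing version and a SHA-256 digest for audit trails, republishing identical text returns the existing version instead of creating another variant, and exactly one version per prompt is marked as production, only after it passes an evaluation gate (represented here by a simple `passed_eval` flag).

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    digest: str  # SHA-256 of the text, useful in audit logs and traces


class PromptRegistry:
    """Hypothetical in-memory prompt store with versioning and a production pointer."""

    def __init__(self) -> None:
        self._versions: dict[str, list[PromptVersion]] = {}
        self._production: dict[str, int] = {}

    def publish(self, prompt_id: str, text: str) -> PromptVersion:
        history = self._versions.setdefault(prompt_id, [])
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        for existing in history:
            if existing.digest == digest:
                return existing  # identical text: reuse it, don't create a new variant
        new_version = PromptVersion(len(history) + 1, text, digest)
        history.append(new_version)
        return new_version

    def promote(self, prompt_id: str, version: int, passed_eval: bool) -> None:
        """Mark a version as production-grade; passed_eval stands in for a real eval suite."""
        if not passed_eval:
            raise ValueError(f"{prompt_id} v{version} has not passed evaluation")
        if not 1 <= version <= len(self._versions.get(prompt_id, [])):
            raise KeyError(f"{prompt_id} has no version {version}")
        self._production[prompt_id] = version

    def production(self, prompt_id: str) -> PromptVersion:
        """The single answer to 'which prompt is live?'."""
        return self._versions[prompt_id][self._production[prompt_id] - 1]


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.publish("refund-triage", "You are a refund assistant.")
    v2 = registry.publish("refund-triage",
                          "You are a refund assistant. Cite the policy section.")
    registry.promote("refund-triage", v2.version, passed_eval=True)
    live = registry.production("refund-triage")
    print(live.version, live.digest[:12])
```

Logging the digest of the live prompt with every agent response is what makes later debugging and audits tractable: any output can be traced to the exact prompt text that produced it.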

5. Data and Context Sprawl

Cause:

Uncoordinated data usage across RAG systems and vector stores.

Real-world pattern: Same datasets embedded multiple times with different configurations.

Impact:

- Inconsistent answers

- Increased cost

- Data governance gaps
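
A lightweight control is to fingerprint each vector index by the configuration that determines its contents and to audit the inventory periodically. In this sketch, `IndexConfig`, the `kb://` dataset URIs, and the embedding model names are hypothetical. Indexes with identical fingerprints are pure duplicated cost, while one dataset indexed under several different configurations is a likely source of inconsistent answers.

```python
import hashlib
import json
from collections import defaultdict
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class IndexConfig:
    name: str             # the index's own name (excluded from the fingerprint)
    dataset_uri: str      # hypothetical URI of the source dataset
    embedding_model: str  # placeholder model name
    chunk_size: int
    chunk_overlap: int


def fingerprint(cfg: IndexConfig) -> str:
    """Hash everything that determines index contents, ignoring the index name."""
    fields = asdict(cfg)
    fields.pop("name")
    canonical = json.dumps(fields, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_indexes(indexes: list[IndexConfig]) -> tuple[list[list[str]], list[str]]:
    """Return (exact duplicate groups, datasets indexed under divergent configs)."""
    names_by_fp: dict[str, list[str]] = defaultdict(list)
    fps_by_dataset: dict[str, set[str]] = defaultdict(set)
    for cfg in indexes:
        fp = fingerprint(cfg)
        names_by_fp[fp].append(cfg.name)
        fps_by_dataset[cfg.dataset_uri].add(fp)
    duplicates = sorted(sorted(names) for names in names_by_fp.values() if len(names) > 1)
    divergent = sorted(ds for ds, fps in fps_by_dataset.items() if len(fps) > 1)
    return duplicates, divergent


if __name__ == "__main__":
    inventory = [
        IndexConfig("hr-bot-idx", "kb://hr-policies", "embed-a", 512, 64),
        IndexConfig("it-bot-idx", "kb://hr-policies", "embed-a", 512, 64),
        IndexConfig("legal-idx", "kb://hr-policies", "embed-b", 1024, 0),
        IndexConfig("sales-idx", "kb://price-lists", "embed-a", 512, 64),
    ]
    print(audit_indexes(inventory))
```

In the sample inventory, `hr-bot-idx` and `it-bot-idx` are flagged as exact duplicates, and `kb://hr-policies` is flagged as divergent because `legal-idx` embeds the same source with a different model and chunking.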

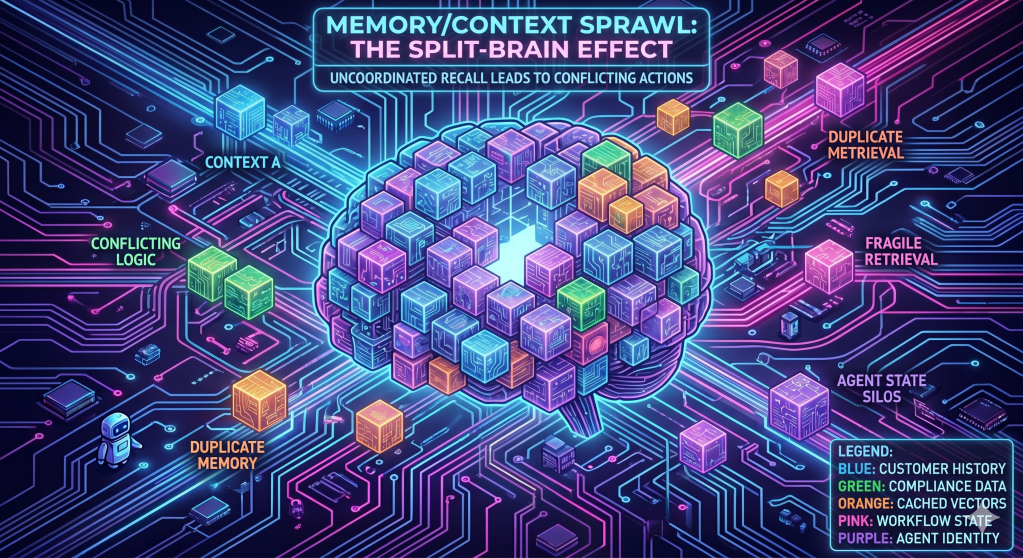

6. Memory Sprawl

Cause:

Agents maintain fragmented memory across multiple stores.

Impact:

- Conflicting context

- Privacy risks

- Difficult to trace decision logic

7. Identity and Authorization Sprawl

Cause:

No unified identity model for agents and tools.

Real-world pattern: Agents using ad hoc credentials or inconsistent authentication mechanisms.

Impact:

- Security vulnerabilities

- No enforcement of least privilege

- Limited auditability
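
The remedy is to give every agent its own identity with explicit scopes and to check each tool call against the scope that operation requires, denying by default. In this minimal sketch, the tool names, operations, and scope strings such as `crm:read` are hypothetical; in practice the identity and its scopes would come from the enterprise identity provider rather than being constructed in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: frozenset[str]  # granted permissions, e.g. "crm:read"


# Hypothetical mapping from (tool, operation) to the single scope it requires.
REQUIRED_SCOPE = {
    ("crm", "get_customer"): "crm:read",
    ("crm", "update_customer"): "crm:write",
    ("billing", "issue_refund"): "billing:refund",
}


class AuthorizationError(PermissionError):
    pass


def authorize(identity: AgentIdentity, tool: str, operation: str) -> None:
    """Raise unless the agent holds the exact scope this operation needs."""
    required = REQUIRED_SCOPE.get((tool, operation))
    if required is None:
        # Deny by default: operations nobody registered cannot be called at all.
        raise AuthorizationError(f"{tool}.{operation} is not a registered operation")
    if required not in identity.scopes:
        raise AuthorizationError(
            f"{identity.agent_id} lacks scope {required!r} for {tool}.{operation}"
        )


if __name__ == "__main__":
    faq_bot = AgentIdentity("faq-bot", frozenset({"crm:read"}))
    authorize(faq_bot, "crm", "get_customer")  # allowed: read-only scope granted
    try:
        authorize(faq_bot, "billing", "issue_refund")
    except AuthorizationError as err:
        print(err)
```

Granting `faq-bot` only `crm:read` means a manipulated refund request fails at the authorization layer, rather than depending on the model to refuse it.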

8. Workflow and Orchestration Sprawl

Cause:

Multiple orchestration patterns emerge across teams.

Impact:

- Lack of reuse

- Difficult troubleshooting

- Inefficient execution

9. Observability and Governance Sprawl

Cause:

Monitoring and control are fragmented across tools.

Impact:

- Limited visibility

- Slow incident resolution

- Compliance exposure

10. Cost Sprawl

Cause:

AI costs grow without clear ownership or optimization.

Impact:

- Budget overruns

- No cost attribution

- Inefficient usage patterns
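
Attribution starts with tagging every model call with the agent and team that made it. This sketch assumes a hypothetical `UsageRecord` emitted per call and placeholder per-1K-token prices (substitute your providers' actual rates). It uses `Decimal` to avoid floating-point drift in currency sums and rolls untagged usage into an explicit `UNATTRIBUTED` bucket, so gaps in tagging stay visible instead of disappearing into a shared total.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal

# Placeholder prices per 1K tokens; these are NOT real provider rates.
PRICE_PER_1K = {
    "model-a": {"input": Decimal("0.003"), "output": Decimal("0.015")},
    "model-b": {"input": Decimal("0.0005"), "output": Decimal("0.0015")},
}


@dataclass(frozen=True)
class UsageRecord:
    agent_id: str
    team: str  # empty string when the caller was never tagged
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(record: UsageRecord) -> Decimal:
    """Cost of one call from its token counts and the placeholder price table."""
    prices = PRICE_PER_1K[record.model]
    return (record.input_tokens * prices["input"]
            + record.output_tokens * prices["output"]) / 1000


def attribute_costs(records: list[UsageRecord], by: str = "team") -> dict[str, Decimal]:
    """Sum cost per value of the chosen field ("team", "agent_id", or "model")."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for record in records:
        key = getattr(record, by) or "UNATTRIBUTED"
        totals[key] += call_cost(record)
    return dict(totals)


if __name__ == "__main__":
    usage = [
        UsageRecord("support-bot", "cx", "model-a", 2000, 500),
        UsageRecord("faq-bot", "cx", "model-b", 4000, 1000),
        UsageRecord("deal-desk", "sales", "model-a", 1000, 1000),
        UsageRecord("shadow-agent", "", "model-b", 1000, 0),
    ]
    print(attribute_costs(usage))
    print(attribute_costs(usage, by="agent_id"))
```

Grouping by `agent_id` or `model` from the same records answers the follow-up questions (which agent, which model) without a second pipeline.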

The Core Issue: Decentralized Innovation Without Central Control

None of the above is surprising.

Agentic AI lowers the barrier to building intelligent systems. That is its strength—but also its risk.

Without a central architecture and control plane, every team optimizes locally, and the enterprise suffers globally.

The Consequence: Silent, Compounding Tech Debt

This is where the real risk lies.

Unlike traditional systems, Agentic AI systems are probabilistic, dynamic, and interconnected. When sprawl sets in:

- Fixing behavior becomes harder over time

- Security gaps multiply silently

- Costs compound without visibility

- Governance becomes reactive instead of proactive

This is not immediate failure—it is slow degradation.

And by the time it is visible, the cost of correction is significantly higher.

The Enterprise POV: Governance Must Come First, Not Later

There is a common approach being adopted today:

> “Let teams experiment first, we will standardize later.”

This approach worked (to some extent) in earlier paradigms.

It will not work for Agentic AI at scale.

Why?

- Agents make decisions, not just execute code

- They interact with sensitive systems and data

- They evolve through prompts, memory, and context

Retrofitting governance later is significantly harder and more expensive.

What Must Be Put in Place Upfront

To avoid long-term tech debt, enterprises must establish the following from the start:

1. Architecture Principles

- Define a standard architecture for agents, tools, memory, and models

- Introduce a central control plane (platform approach)

2. Design Principles

- Reuse over duplication (skills, tools, workflows)

- Loose coupling via controlled interfaces

- Policy-driven access to systems and data

3. Governance Framework

- Agent registration and lifecycle management

- Model and prompt governance

- Data usage and lineage controls

4. Security and Identity Model

- Identity for every agent

- Fine-grained authorization for tool access

- Secure agent-to-agent communication

5. Operational Controls

- Unified observability (logs, traces, metrics)

- Cost tracking and attribution

- Runtime policy enforcement
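
Runtime policy enforcement and unified observability fit naturally at a single choke point that every tool call passes through. The sketch below shows a hypothetical `PolicyEnforcementPoint`: policies are plain functions that return a denial reason or `None`, every decision (allow or deny) is appended to one structured audit log with a trace ID, and the tool handler runs only if no policy objects. The two policies and the `EXTERNAL_TOOLS` set are illustrative assumptions, not a complete rule set.

```python
import json
import time
import uuid
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class ToolCall:
    agent_id: str
    tool: str
    payload: dict[str, Any]
    data_classification: str = "internal"
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)


# A policy inspects a call and returns a denial reason, or None to allow it.
Policy = Callable[[ToolCall], Optional[str]]

EXTERNAL_TOOLS = {"web_search", "third_party_translate"}  # hypothetical tool names


def no_confidential_data_to_external_tools(call: ToolCall) -> Optional[str]:
    if call.data_classification == "confidential" and call.tool in EXTERNAL_TOOLS:
        return f"confidential data may not be sent to external tool {call.tool!r}"
    return None


def max_payload_chars(limit: int) -> Policy:
    """Build a policy that rejects payloads whose JSON form exceeds `limit` characters."""
    def policy(call: ToolCall) -> Optional[str]:
        size = len(json.dumps(call.payload))
        return f"payload of {size} chars exceeds limit {limit}" if size > limit else None
    return policy


class PolicyEnforcementPoint:
    """Hypothetical single enforcement point: evaluate policies, audit, then execute."""

    def __init__(self, policies: list[Policy]) -> None:
        self.policies = policies
        self.audit_log: list[dict[str, Any]] = []  # stand-in for a central log pipeline

    def execute(self, call: ToolCall, handler: Callable[[dict[str, Any]], Any]) -> Any:
        reasons = []
        for policy in self.policies:
            reason = policy(call)
            if reason is not None:
                reasons.append(reason)
        self.audit_log.append({
            "ts": time.time(),
            "trace_id": call.trace_id,
            "agent_id": call.agent_id,
            "tool": call.tool,
            "decision": "deny" if reasons else "allow",
            "reasons": reasons,
        })
        if reasons:
            raise PermissionError("; ".join(reasons))
        return handler(call.payload)
```

Because allowed and denied calls land in the same log with the same fields, cost attribution, incident investigation, and compliance reporting can all read from one source instead of from each team's own tooling.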

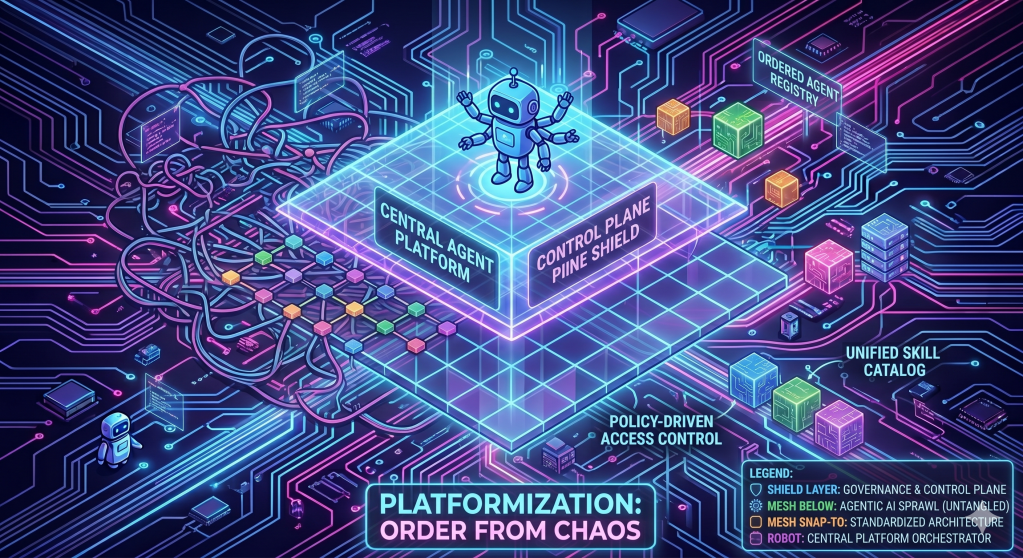

The Practical Direction: Platformization

The direction is becoming clear across the industry:

Enterprises need an Agent Platform—not just agent frameworks.

A platform approach provides:

- Standardization

- Governance

- Reuse

- Observability

- Security

—while still enabling teams to innovate.

Final Thoughts

Agentic AI is not just another technology layer—it is a new execution model for enterprises.

With that comes a responsibility:

Design for control before scale.

Organizations that ignore sprawl will move fast initially—but will slow down later under the weight of complexity, risk, and cost.

Organizations that address it early will build systems that are not just powerful, but sustainable and scalable.

The choice is not between speed and governance.

The real choice is whether to pay the cost now—or pay significantly more later.

Author’s Note: AI-assisted writing tools were used to support the creation of this post. All concepts, perspectives, and the underlying thought process originate from me; the AI served only as a drafting and refinement aid.

Published by Sri Rajalingam

CTO, Entrepreneur, Technology Evangelist & Trainer focused on building companies and helping enterprises apply and adopt AI and Cloud to create real, measurable impact.