From a DeepSeek Article to Trying to Understand Semantics vs. Reasoning: Cognitive Concepts in AI

Introduction

This first part captures the beginning of my thought journey. What started as reading an article about DeepSeek’s long-text technique slowly turned into a more fundamental question about what we really mean when we say an AI system “understands.”

A Simple Article That Led to a Big Question

I recently read an article about a research study that questioned a technique used by DeepSeek to help AI models read very long texts. The idea sounded impressive: compress large amounts of text so an AI can process more information at once.

But the researchers found something surprising.

The AI seemed to perform well not because it truly understood the text, but because it relied on patterns it had seen before. When those patterns were disrupted, the model struggled badly.
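To make "disrupting patterns" concrete, here is a minimal Python sketch of one way such a probe could be built. It is an assumption for illustration, not the study's actual procedure: the helper `shuffle_words` (a hypothetical name) keeps every word of a passage but scrambles their order, so familiar phrasing can no longer help.

```python
import random


def shuffle_words(text: str, seed: int = 0) -> str:
    """Return `text` with the same words in a scrambled order.

    Hypothetical helper for illustration only; not taken from the study.
    """
    words = text.split()
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    rng.shuffle(words)
    return " ".join(words)


original = "the cat sat on the mat because it was warm"
disrupted = shuffle_words(original)

# Same words, different order: familiar phrase patterns are gone.
print(original)
print(disrupted)
```

In principle, a model asked to read a passage back should manage either version. A large gap between the two would suggest it is leaning on familiar word order rather than on what is actually on the page.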

Even though I already had a working understanding of how LLMs and Transformer architectures work, something about this finding made me want to dig deeper. If these models struggled the moment patterns broke down, what exactly were they doing when we say they “understand” text?

This thought triggered a deeper line of questioning in my mind — not about DeepSeek specifically, but about how we interpret progress in GenAI as a whole.

That curiosity naturally led me to ask:

Are modern AI systems really understanding, or are they just very good at guessing?

Once that question formed, it became clear that I needed to first separate two ideas that are often mixed together: semantic understanding and cognitive capability.

Semantic Understanding (Knowing What Something Means)

The first concept I needed clarity on was semantic understanding — a term frequently used but rarely unpacked.

Semantic understanding simply means understanding what a piece of text means.

In everyday language:

It answers the question: “What does this mean?”

Large Language Models (LLMs) are exceptionally strong in this area.

They can:

- Read a paragraph and explain it

- Summarize documents

- Translate languages

- Recognize relationships between ideas

For instance, when an AI explains a legal document or summarizes a report, it is exercising semantic understanding. In many ways, this mirrors how humans comprehend words and sentences.

However, as I reflected on the DeepSeek article, an important limitation became obvious.

Semantic understanding stops at meaning.

It explains what is being said, but it does not decide what should happen next.

That realization naturally pushed me toward the next question: if understanding meaning is not enough, what role does reasoning actually play?

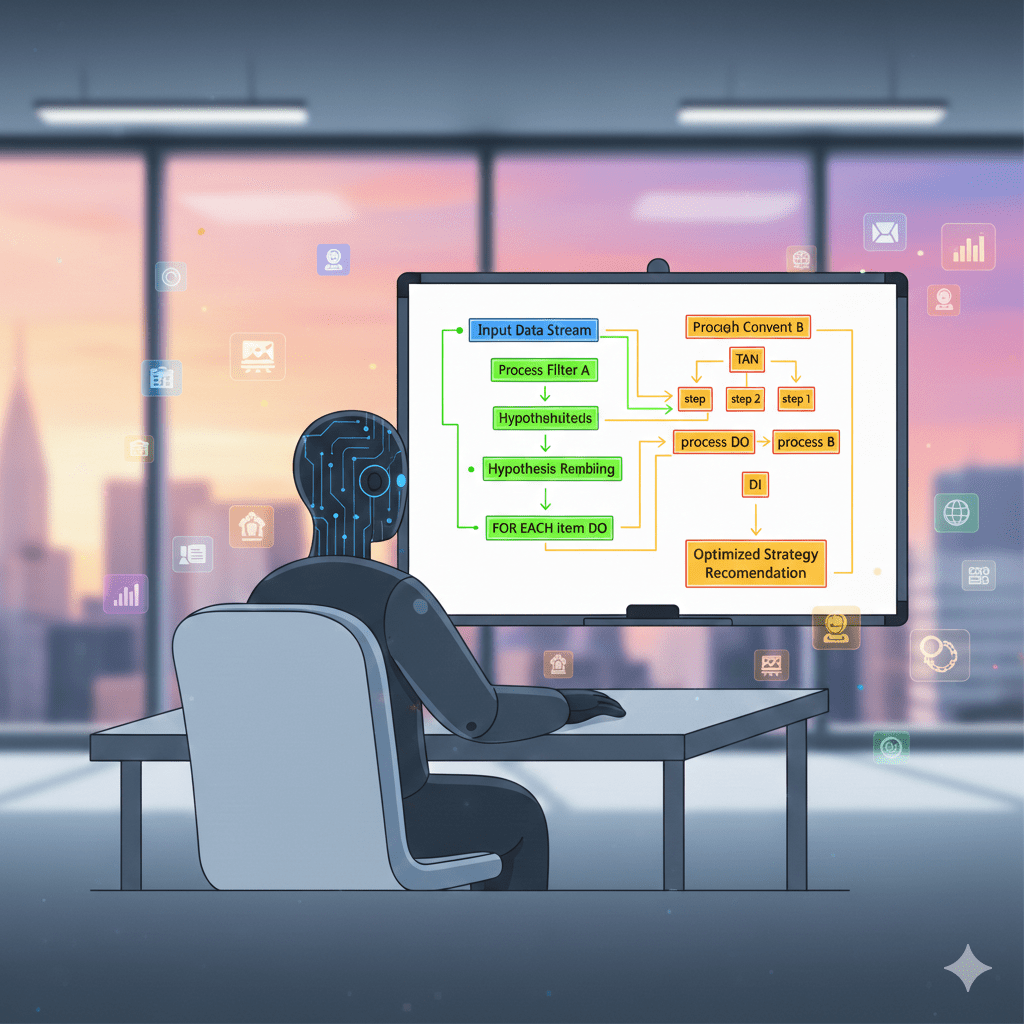

Reasoning Models (Thinking Better, Not Acting Better)

At this point, my attention shifted to reasoning models, often marketed as “thinking” AI.

These models are designed to show their work. They break problems into steps, apply logic, and produce more structured explanations.

On the surface, this feels like a major leap forward — and in many ways, it is.

But when I looked more carefully, I noticed that reasoning models still revolve around a single question:

“What is the best response to this input?”

Even with better logic, they still do not:

- Choose goals (which is critical for decision-making; without goals, outputs remain just well-organized facts)

- Take responsibility for outcomes

- Act independently in the world

So while reasoning models think better, they don’t actually decide.

This insight clarified something important for me: reasoning improves how a response is structured, but it still operates within the same boundary as semantic understanding. It produces a response to an input and stops there.
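One way to picture that boundary is as a function signature. The sketch below is a toy, not how any real reasoning model is implemented: `reason` and `Response` are hypothetical names, and the function only handles simple addition questions. It takes an input and returns its steps plus an answer. Nothing in it picks a goal or does anything beyond returning a response.

```python
from dataclasses import dataclass


@dataclass
class Response:
    steps: list[str]  # the "shown work"
    answer: str       # the final response


def reason(question: str) -> Response:
    """Toy stand-in for a reasoning model: input in, response out.

    Hypothetical; it only handles questions like "What is 12 + 30?".
    """
    # Step 1: pull the two numbers out of the question.
    tokens = question.rstrip("?").split()
    a, b = int(tokens[-3]), int(tokens[-1])
    # Step 2: apply the operation and record each step.
    steps = [
        f"Identify the operands: {a} and {b}",
        f"Add them: {a} + {b} = {a + b}",
    ]
    return Response(steps=steps, answer=str(a + b))


result = reason("What is 12 + 30?")
for step in result.steps:
    print(step)
print(result.answer)
```

The function is finished once it returns. Whether anything should happen with that answer is decided somewhere outside it.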

That naturally led to the next question — if neither understanding nor reasoning decides action, then what does?

Part 1 Conclusion: A Boundary Becomes Visible

By the end of this first part, one boundary had become very clear to me.

Understanding meaning and reasoning about it, even in sophisticated ways, do not automatically lead to decision-making or action. Something else is required.

In the next part, I will share what I learned about the missing layer: cognitive capability, and why AI agents represent an important architectural shift rather than just a smarter model.