The Impact Layers — Why AI Models Alone Don’t Deliver Value

Introduction

This is the third post in the Applied AI Thoughts for Realization series.

In the first post, Why AI Feels Overwhelming, we tackled the problem of “AI Fatigue” and the trap of Tactical Thinking—chasing the latest tools without a plan. We argued for a shift to Structural Thinking, focusing on the architecture of problems rather than just the features of models.

In the second post, A Simple Mental Model — 4 Pillars, we established the horizontal dimension of our mental model. We categorized the AI landscape into 4 Domain Pillars—Consumer, Enterprise, Science, and Physical AI—and used the “Engine vs. Vehicle” analogy to show why a “Sports Car” strategy (Consumer) fails when you need a “Cargo Train” solution (Enterprise).

With the 4 Pillars in place, and an understanding of how the application of AI varies across them, it is critical to understand what is required to deliver value in each pillar. This brings us to the vertical dimension of our framework: the Impact Layers, which play a critical role in delivering value for every pillar.

Why Is a Model Not Everything in an AI Solution?

There is often a disconnect when we try to adopt AI. On one hand, we see headlines about models acing exams and writing code, which makes it feel as if a model is all you need to roll out an AI-driven solution. On the other hand, when enterprises or consumers start deploying AI-based solutions, they realize it takes much more than a model. They run into challenges like:

- The solution is not responsive and impacts user experience.

- It does not produce consistent or reliable results.

- It’s not quite fast enough, or it produces results that need a second look.

- Integration with existing systems is complex and time-consuming.

- The cost of running the solution at scale becomes prohibitively expensive.

- It works in demos but fails on real-world, messy data.

- Users don’t trust the output enough to act on it without verification.

- Models occasionally “hallucinate” or make confident errors.

The question then arises: why is there such a gap between the intelligence we hear and read about in the model and the actual capability we experience?

The answer lies in understanding that a “Model” is not the final “Product” or “Solution”.

A model is just raw potential—like a powerful engine sitting on a factory floor. To turn that potential into actual value, it is dependent on several layers of translation. It needs to be hosted on hardware, connected to tools, wrapped in an interface, and integrated into a workflow.

If any one of those layers is weak, the entire experience fails.

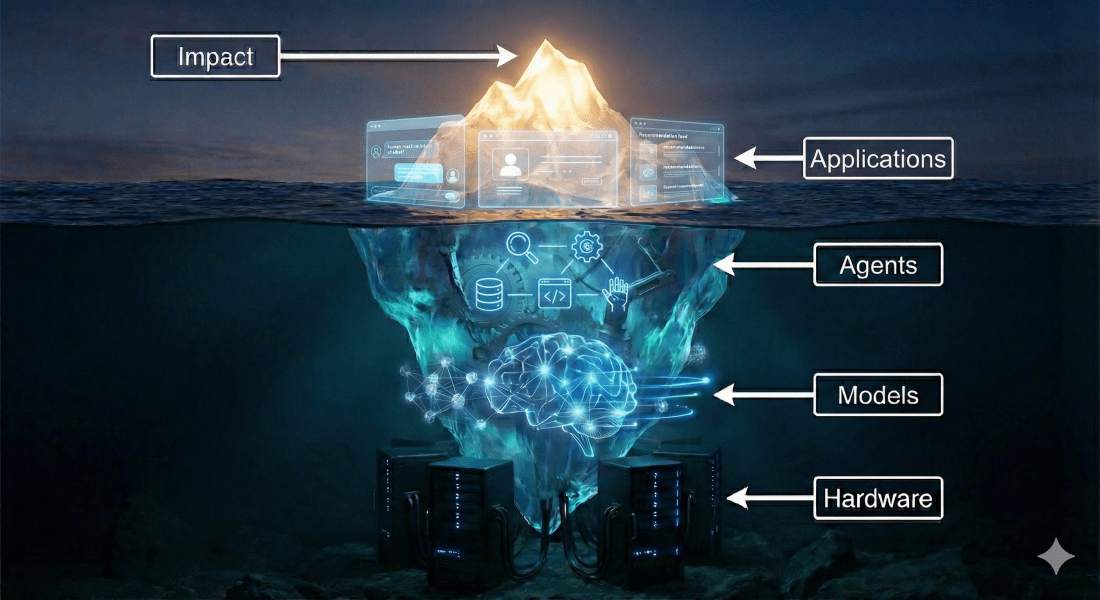

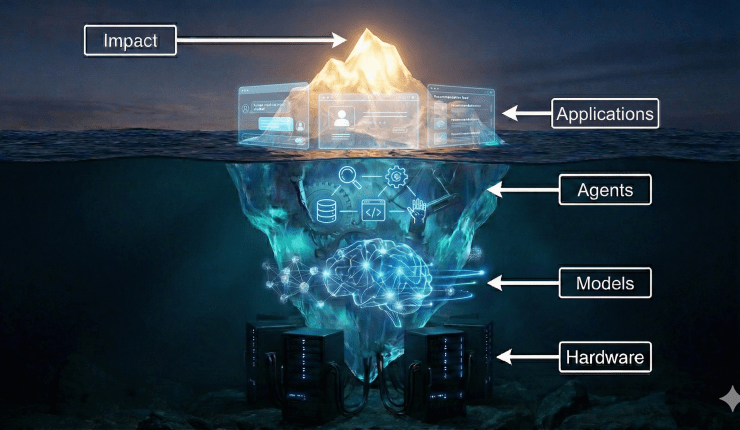

The “Iceberg” Theory of AI

A helpful way to visualize this is the Iceberg Theory of AI.

When you interact with an AI application—whether it’s a chatbot, a recommendation engine, or a robot—you are only seeing the tip of the iceberg.

- Above the Water (Visible): The Application Layer. This is the user interface, the buttons, and the response time. This is what we judge.

- Below the Water (Invisible): The massive infrastructure that supports that tip. This includes the Agents (logic), the Models (intelligence), and the Hardware (compute).

Most of the hype focuses on the “Model” layer deep underwater. But most of the failure happens in the layers between the model and the user. To understand why an AI project succeeds or fails, we need to look below the surface and examine the 5 Layers of AI Impact.

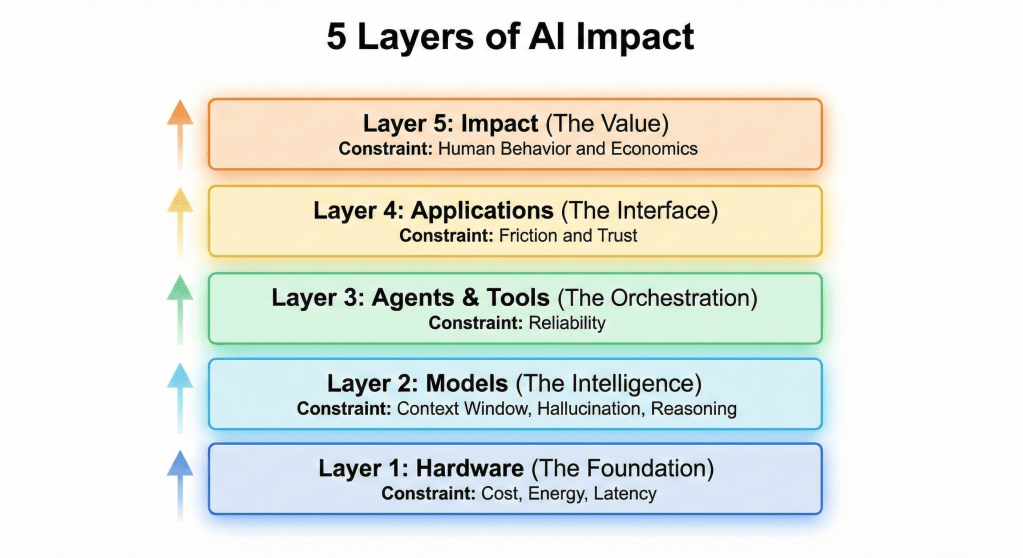

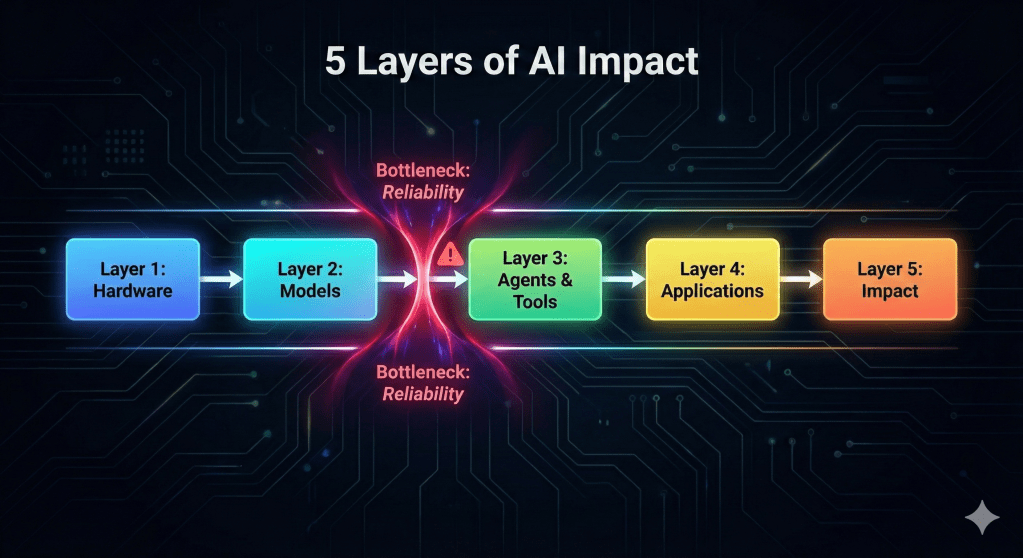

The 5 Layers of AI Impact

Progress in AI doesn’t happen all at once. It moves up this stack, layer by layer.

Layer 1: Hardware (The Foundation)

This is the physical reality of AI. It includes the GPUs (chips) that train models, the data centers that host them, and the edge devices (phones, robots) that run them.

- Why it matters: Hardware dictates feasibility. You might have a brilliant AI model, but if it requires $10,000 of compute per hour to run, it cannot be a consumer product. If it takes 5 seconds to respond, it cannot be a self-driving car.

- The Constraint: Cost, Energy, and Latency.
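The feasibility argument above is simple arithmetic, and a minimal sketch can make it concrete. The `Deployment` class, the function names, and every number below are hypothetical and chosen only for illustration; real cost and latency budgets depend on the product.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical description of how a model is served."""
    compute_cost_per_hour: float  # USD per hour of compute
    requests_per_hour: int        # traffic served by that compute
    latency_seconds: float        # time to produce one response


def cost_per_request(d: Deployment) -> float:
    """Spread the hourly compute bill across the requests it serves."""
    return d.compute_cost_per_hour / d.requests_per_hour


def is_feasible(d: Deployment, max_cost_per_request: float,
                max_latency_seconds: float) -> bool:
    """Feasible only if BOTH the cost budget and the latency budget are met."""
    return (cost_per_request(d) <= max_cost_per_request
            and d.latency_seconds <= max_latency_seconds)


# Illustrative numbers only: $10,000/hour of compute serving 50,000 requests/hour.
expensive = Deployment(compute_cost_per_hour=10_000, requests_per_hour=50_000,
                       latency_seconds=1.0)
print(cost_per_request(expensive))  # 0.2 (USD per request)
print(is_feasible(expensive, max_cost_per_request=0.01,
                  max_latency_seconds=2.0))  # False: too expensive per request
```

The point of the sketch is that a deployment can fail on either budget independently: a cheap model that answers in 5 seconds is as infeasible for a real-time use case as a fast model that costs too much per request.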

Layer 2: Models (The Intelligence)

This is what we typically call “AI.” It includes Large Language Models (LLMs), diffusion models (images), and predictive models. This layer provides the raw reasoning and pattern-matching capability.

- Why it matters: Models dictate potential. A smarter model can solve harder problems.

- The Constraint: Context Window (memory), Hallucination (accuracy), and Reasoning capability.

Layer 3: Agents & Tools (The Orchestration)

This is the bridge between thought and action. A model can only output text; an Agent can use that text to call a tool—like searching the web, querying a database, or clicking a button.

- Why it matters: Agents dictate utility. Without this layer, AI is just a chatbot. With this layer, AI becomes a coworker that can book flights, write code to disk, or control a robot arm.

- The Constraint: Reliability. If an agent gets confused and clicks the wrong button, it causes chaos.
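To illustrate the bridge between text and action, here is a minimal sketch of one agent step. It assumes the model emits a JSON string naming a tool and its arguments; that convention, the `search_web` stub, and the `TOOLS` registry are all hypothetical and not the API of any particular framework. The validation checks are where the reliability constraint shows up: the agent refuses to act on output it cannot interpret.

```python
import json


def search_web(query: str) -> str:
    """Stand-in tool; a real one would call a search service."""
    return f"results for {query!r}"


# Registry of the only actions the agent is allowed to take.
TOOLS = {"search_web": search_web}


def run_agent_step(model_output: str) -> str:
    """Turn the model's text into a tool call, rejecting anything malformed."""
    try:
        request = json.loads(model_output)
    except json.JSONDecodeError:
        return "error: model output was not valid JSON"
    if not isinstance(request, dict):
        return "error: expected a JSON object"

    name = request.get("tool")
    if not isinstance(name, str) or name not in TOOLS:
        return "error: unknown tool"

    args = request.get("args", {})
    if not isinstance(args, dict):
        return "error: args must be a JSON object"
    try:
        return TOOLS[name](**args)
    except TypeError:
        # Raised when the model supplies wrong or missing argument names.
        return "error: bad arguments"


print(run_agent_step('{"tool": "search_web", "args": {"query": "flights"}}'))
print(run_agent_step('{"tool": "delete_database", "args": {}}'))  # rejected
```

Keeping the allowed actions in an explicit registry is one simple way to limit the damage when the model "clicks the wrong button": an unrecognized request becomes an error message rather than an action.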

Layer 4: Applications (The Interface)

This is the software layer where the human meets the machine. It includes the UI/UX, the workflow integration, and the “vibe” of the product.

- Why it matters: Applications dictate adoption. A powerful agent wrapped in a confusing interface will be ignored. The best AI applications often hide the AI completely (e.g., Netflix recommendations).

- The Constraint: Friction and Trust. Users must feel in control.

Layer 5: Impact (The Value)

This is the final result. It is not software; it is the change in the real world. Does this tool save time? Does it cure a disease? Does it increase revenue?

- Why it matters: Impact dictates sustainability. If an AI project doesn’t generate real value (ROI or societal good), it will eventually be shut down, no matter how cool the technology is.

- The Constraint: Human Behavior and Economics. Just because a tool exists doesn’t mean people will change their habits to use it.

The Bottleneck Theory: Why Progress is Non-Linear

The most important thing to understand about these layers is that they must work together coherently.

We cannot simply “upgrade” one layer and expect the whole system to improve. In fact, the system is always limited by its weakest link.

- Historical Example: In the 1960s, AT&T invented the Picturephone. It was a brilliant Layer 4 (Application) idea. But Layer 1 (Network Bandwidth) wasn’t ready. The product failed spectacularly.

- Current Example: Today, we have incredible Layer 3 (Agent) concepts—AI employees that can do everything. But often, Layer 2 (Model Reliability) isn’t quite there yet; the models still hallucinate occasionally. As a result, the “AI Employee” fails to be reliable enough for critical work.

This interdependence creates a “hurdle for adoption.” You might have the budget and the desire, but if one layer in the stack is immature, your project will stall.
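The "weakest link" idea can be sketched as taking the minimum over per-layer readiness scores. The 0-to-1 scores below are made-up illustrations, not measurements, and the `bottleneck` helper is a hypothetical name.

```python
from typing import Dict, Tuple


def bottleneck(readiness: Dict[str, float]) -> Tuple[str, float]:
    """Return the least-ready layer and its score.

    The system as a whole is treated as only as ready as this layer.
    """
    layer = min(readiness, key=readiness.get)
    return layer, readiness[layer]


# Illustrative scores only: a strong application idea on an immature network.
picturephone = {"hardware": 0.2, "application": 0.9}
print(bottleneck(picturephone))  # ('hardware', 0.2)

# Upgrading a layer that is not the bottleneck leaves the result unchanged.
picturephone["application"] = 1.0
print(bottleneck(picturephone))  # ('hardware', 0.2)
```

This is why progress looks non-linear: improvements to the other layers do not show up in the result until the limiting layer itself moves.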

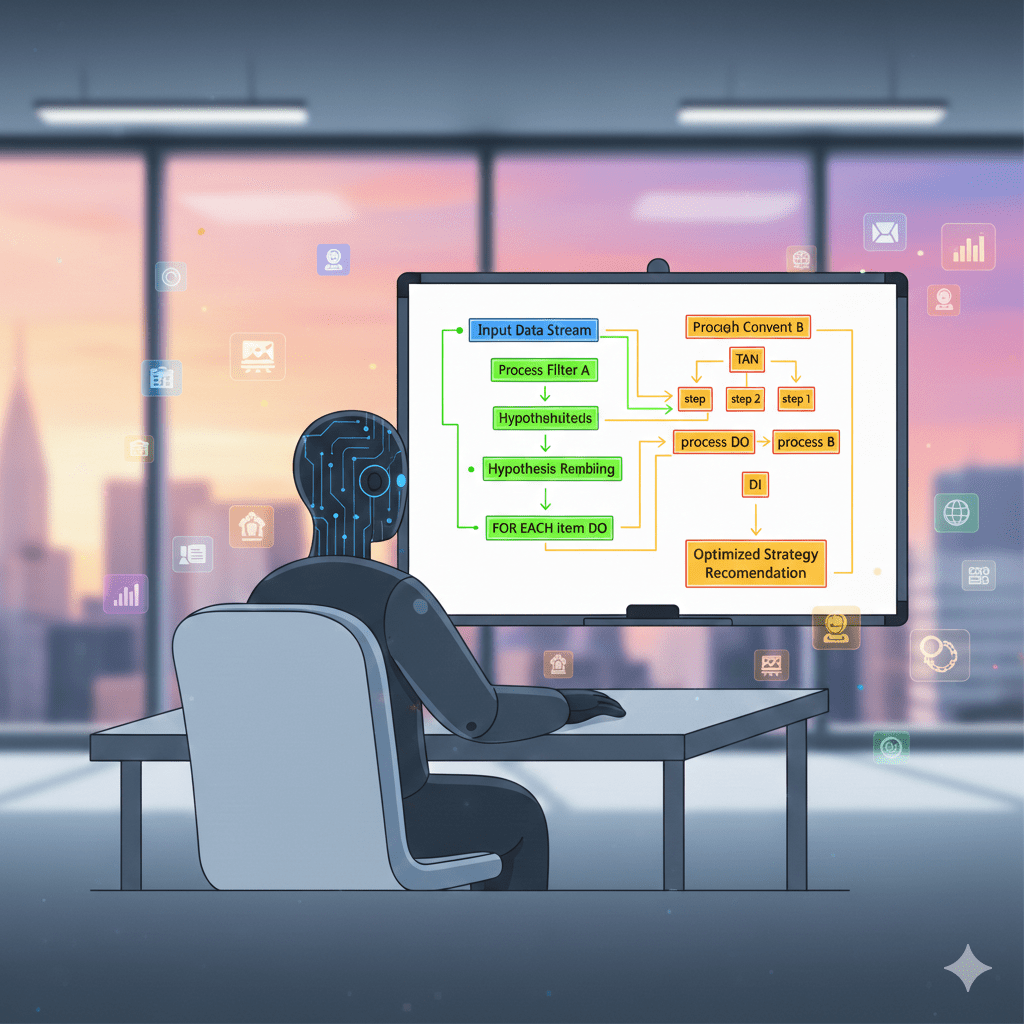

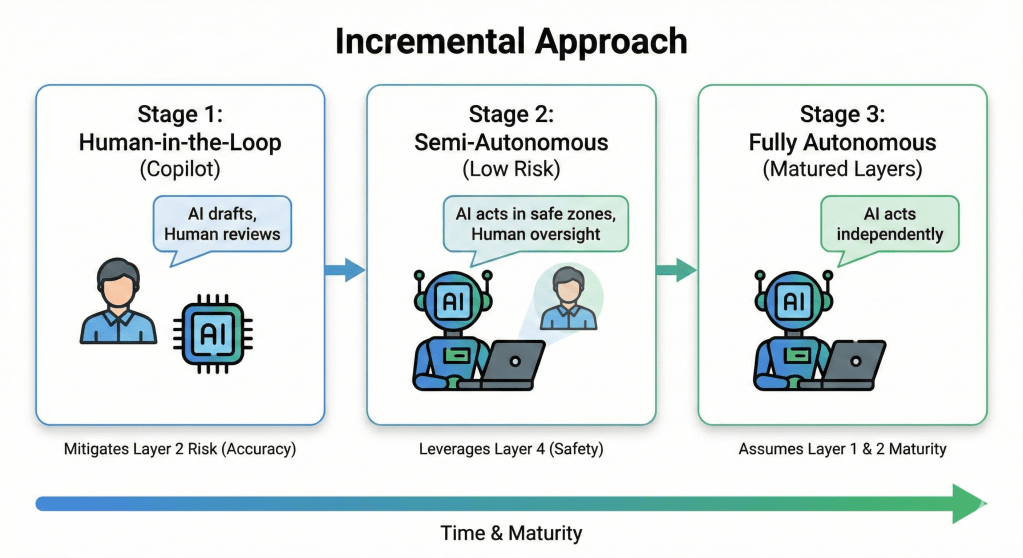

Guidance: The Incremental Approach

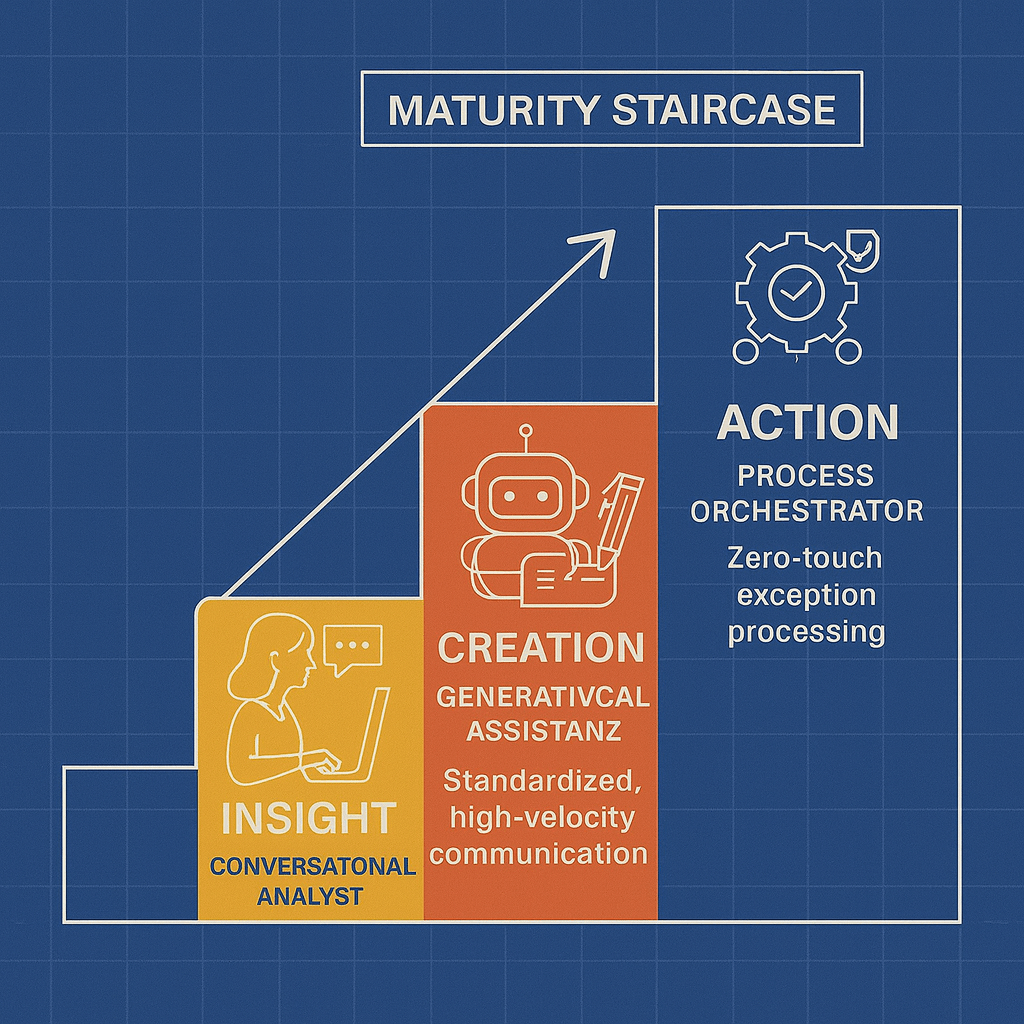

So, how do you build when the stack isn’t perfect? You adopt an Incremental Approach.

Instead of trying to build the “Ultimate AI System” that relies on every layer being perfect, you build for the layers that are ready today.

A sample scenario for an incremental build:

- Start with “Human-in-the-Loop” (Layer 3 Lite): Don’t try to build fully autonomous agents yet. Build “Copilots” where the AI drafts the work, and a human reviews it. This mitigates the Layer 2 (Accuracy) risk.

- Focus on “Low-Risk” Applications (Layer 4 Safety): Deploy AI in internal brainstorming or draft generation before putting it in front of customers.

- Scale as Layers Mature: As models get cheaper (Layer 1 improves) and smarter (Layer 2 improves), you gradually remove the human guardrails.
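The steps above can be sketched as a routing rule for AI-generated drafts. The `Draft` type, its `confidence` score, and the threshold values are hypothetical; in practice the signal used to decide when a human can step back would come from your own evaluation data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    confidence: float  # hypothetical quality score in [0, 1]


def route(draft: Draft, auto_approve_threshold: Optional[float] = None) -> str:
    """Decide whether a draft needs a human reviewer.

    With no threshold set (the starting point), every draft goes to a human.
    As the underlying layers mature, a threshold can be introduced and then
    lowered so that more drafts skip review.
    """
    if auto_approve_threshold is None:
        return "human_review"
    if draft.confidence >= auto_approve_threshold:
        return "auto_send"
    return "human_review"


draft = Draft(text="Proposed reply to customer", confidence=0.92)
print(route(draft))                               # human_review (copilot stage)
print(route(draft, auto_approve_threshold=0.95))  # human_review
print(route(draft, auto_approve_threshold=0.90))  # auto_send
```

Making the guardrail a single parameter keeps the system the same at every stage; removing the human is a configuration change rather than a rebuild.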

Advantages:

- Immediate Value: You get ROI now, rather than waiting 5 years for “AGI.”

- Learning: Your organization learns how to work with AI data and workflows.

- Safety: You avoid catastrophic failures by keeping humans involved.

Disadvantages:

- Maintenance: You have to constantly update your system as the underlying layers change.

- Process Change: It requires changing how people work (training them to use Copilots), which is often harder than just installing software.

By respecting the bottleneck, you build systems that actually work, rather than science fiction that breaks on day one.

Summary

In this post, we explored the vertical dimension of AI execution: the 5 Layers of Impact. We saw how a seemingly simple AI application is actually supported by a complex stack of Hardware, Models, Agents, and Interfaces, and why the “weakest link” in this chain often determines success. But understanding the pillars and layers is only half the picture. In the next post, “How Pillars and Layers Work Together,” we will merge the horizontal Pillars and vertical Layers into a unified perspective. This approach will allow you to predict the behavior, timeline, and constraints of any AI project by understanding how the technical layers interact differently within each domain pillar.

Author’s Note: AI-assisted writing tools were used to support the creation of this post. All concepts, perspectives, and the underlying thought process originate from me; the AI served only as a drafting and refinement aid.

Previous Post : The Applied AI Thoughts for Realization Blog Post 2